How We Measure the Digital World

We’re on a quest to map every corner of the digital world. For over 10 years, we’ve pioneered a unique, multidimensional approach to measuring online traffic and discovering what it means.

10B

Digital Signals Collected Daily

2TB

Data Analyzed Daily

200

Data Scientists

10K+

Traffic Reports Generated Daily

Great Data, And Lots of it

It’s all about the blend. Our unique mix of digital signals obtained from a variety of unique sources allows us to measure and map the digital world in a timely and comprehensive way.

Website & App Owners

Data directly measured through first party analytics (e.g. Google Analytics) of millions of websites and apps.

Contributors Network

Anonymous traffic data collected from Similarweb products installed on millions of devices, worldwide

Public Data

Publicly available data (e.g. Wikipedia, census data etc.) algorithmically captured and indexed from millions of websites and apps

Partnerships

Rich data pre-analyzed by global partners like DPSs, ISPs, measurement companies and corporate intelligence firms

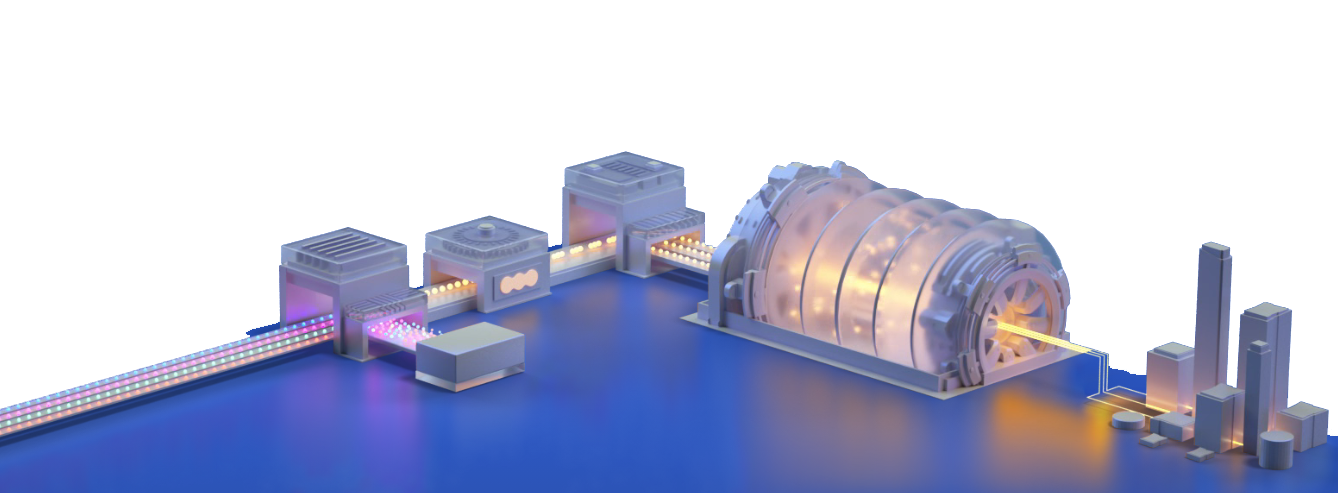

Making the Web Similar

Our unique data science converts noisy online data into a clear signal, normalizing all digital sources into a single view. That’s why we can fairly compare sites to sites and apps to apps.

With Great Power Comes Great Visibility

We extrapolate the tens of billions of digital signals gathered each day into an ultra high-resolution picture of everything happening online. Fueled by AI algorithms perfected by a team of over 200 top data scientists and 50 PhDs. Curious what that kind of power feels like?

Deep Market Insight... At Your Fingertips

Follow the traffic and get the most objective, unbiased view of the digital world. From tactical, day-to-day execution to long-term digital strategy, all your decisions can now be driven by the most important data there is - reality.