Answer Engine Optimization (AEO): What It Is, How It Works, and How to Get in the Answer

Here is a number worth thinking about: 78%.

That is the average zero-click rate for “answer engine optimization” on Google, based on Similarweb keyword data from December 2025 through February 2026 (3-month average, worldwide).

Nearly eight in every ten people searching for this exact topic get their answer directly on the results page, without clicking through to any website.

I find this almost poetic.

The primary keyword for an article about getting cited in AI answers is itself a near-perfect live demonstration of why AEO exists as a discipline. You are reading this because you searched for something, but 78% of people who searched the same query got their answer before they got here.

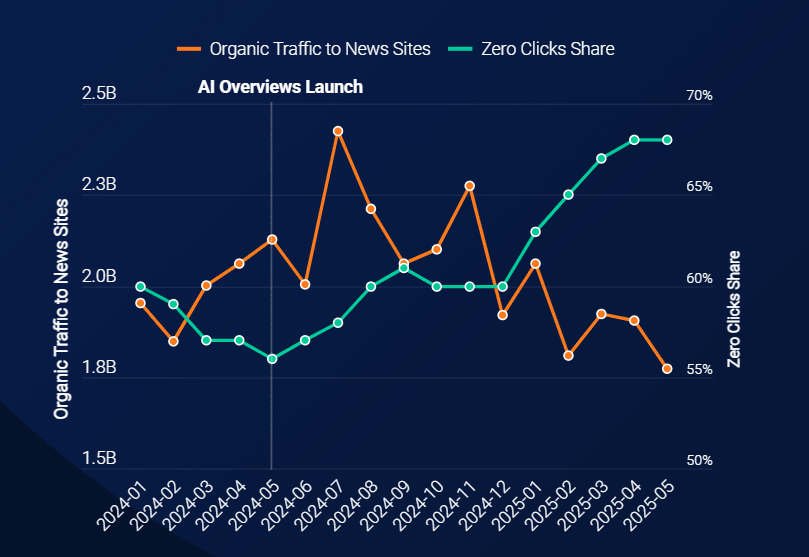

The shift was already underway before AI Overviews became standard: Similarweb data shows that zero-click searches for news-related queries jumped from 56% to 69% between May 2024 and May 2025.

Then, Pew Research Center added empirical weight in July 2025: analyzing the browsing behavior of 900 U.S. adults, they found that users clicked on a traditional search result just 8% of the time when an AI summary appeared, compared to 15% when it did not.

That is a 47% reduction in click-through rate.

Clicks on the sources cited within the AI summary itself: 1%.
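The 47% figure is a relative reduction: the drop in click rate divided by the baseline rate. The minimal Python sketch below recomputes it from the Pew rates quoted above. The function name is illustrative, not part of any published methodology.

```python
def relative_change(before: float, after: float) -> float:
    """Relative change from `before` to `after`, as a fraction of `before`."""
    if before == 0:
        raise ValueError("baseline rate must be non-zero")
    return (after - before) / before


# Pew Research Center (July 2025): 15% click rate without an AI summary, 8% with one.
ctr_without_summary = 0.15
ctr_with_summary = 0.08

change = relative_change(ctr_without_summary, ctr_with_summary)
points = (ctr_without_summary - ctr_with_summary) * 100

print(f"Absolute drop: {points:.0f} percentage points")  # Absolute drop: 7 percentage points
print(f"Relative change: {change:.1%}")                  # Relative change: -46.7%
```

Reporting the relative figure (about 47%) next to the absolute drop (7 points) keeps the size of the effect unambiguous.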

Multiple independent analyses in 2025 pointed in the same direction. This is not a traffic decline you can reverse by publishing more content or fixing your title tags. It is a structural change in how information is consumed.

Users are getting answers. They are just not getting them from your website.

Answer engine optimization is the discipline that addresses this directly. Instead of optimizing to get users to click through to your site, AEO structures content so that AI systems can extract it, attribute it, and deliver it as the answer itself. You stop chasing clicks. You start competing to be the cited source.

This guide covers the full AEO picture: what it is, how AI systems actually select content to cite, how AEO relates to SEO and GEO, the FIFI framework applied to real brand data, the tools that make AEO measurable, and how to track performance once the strategy is in motion.

What is answer engine optimization?

Answer engine optimization (AEO) is the discipline of making your brand the most reliable, easy-to-quote source for the questions your customers ask across AI-powered systems. The goal is to be named and cited as the answer, and not just to rank near it.

This spans Google AI Overviews, People Also Ask boxes, featured snippets, voice assistants, and AI chat platforms. Wherever a user asks a question and receives a synthesized response, AEO is the discipline that determines whether your brand is in that response.

Specifically, AEO involves three sequential outcomes:

- Being retrieved when an AI system searches for source material.

- Being trusted enough to be selected as a primary source.

- Being cited with your brand name (and ideally your URL) in the final answer.

Miss any of these steps and you are present in the information ecosystem but invisible in the answer.

From a lineage perspective, AEO evolved from featured snippet optimization and semantic SEO and has since expanded to cover the full ecosystem of AI answer surfaces. It sits between traditional SEO and full GEO: SEO ensures your pages are indexed and authoritative, AEO structures them so AI systems extract and attribute them as answers, and GEO extends the scope further to maximize share of voice and brand influence across the entire generative AI landscape.

In practice, AEO can be understood as a focused, answer-oriented subset of GEO. The optimization fundamentals overlap, but the scope and metrics diverge.

The zero-click brand economy: why AEO now

Traditional SEO operates on a click economy: your content ranks, a user sees it, a percentage click through, and you earn the visit. AEO operates on a zero-click brand economy: your brand name is delivered inside the answer itself, whether or not a click follows. The user has encountered your brand. That encounter shapes consideration, even invisibly.

The scale of this shift is measurable. Similarweb data showed that zero-click rates for news-related searches rose from 56% to 69% between May 2024 and May 2025. Seer Interactive’s analysis of 3,119 informational queries across 42 organizations found that when AI Overviews appear, organic CTR dropped 61% for brands not cited in the Overview.

For brands that were cited, the same queries drove 35% more organic clicks and 91% more paid clicks compared to non-cited competitors. Being named in the answer does not eliminate clicks; it concentrates them on the brands that earned the attribution.

The stakes extend beyond individual query CTR. Similarweb’s 2026 AI Brand Visibility Report found that 35% of consumers consider AI tools most useful during the initial discovery phase, compared to 13.6% for search engines at the same stage.

Brand consideration is forming in AI-generated answers before most brands realize the purchase journey has started. By the time the user reaches “finding where to buy,” AI and search are nearly level at 24.3% vs 22.1%.

Miss the AEO layer and you are competing for a customer whose consideration set is already closed.

What AEO is not

AEO is not a replacement for SEO. Your content still needs to be indexed, crawlable, and authoritative enough for AI systems to trust it. SEO is the prerequisite. AEO is the optimization layer that determines what happens once you are eligible to be selected as the answer.

AEO is not identical to GEO, though the two share significant overlap. The practical distinction is one of scope and depth.

AEO focuses on winning the answer: being named and cited in response to a specific question, across both SERP-level features and AI chat platforms. GEO extends further to optimize brand share of voice, narrative control, and domain influence across the full generative AI ecosystem, including prompts that never touch a traditional search engine.

AEO is where most brands should start. GEO is where the strategy matures.

AEO is also not purely a content discipline. Schema markup (FAQPage, HowTo, Speakable), page structure, heading hierarchy, and technical crawlability all contribute to whether AI systems can extract your content cleanly.

A well-written answer buried in poor HTML structure will lose the extraction to a structurally cleaner competitor with a less elegant answer. Technical SEO and content work are both required.

How do answer engines select content to cite?

Answer engines select content through retrieval-augmented generation (RAG): the AI interprets the user’s query, retrieves candidate content from web indexes, breaks pages into extractable chunks, scores those chunks for relevance, clarity, and trust, and synthesizes a response. Your content competes at the chunk level, not the page level.

This distinction matters enormously for how you write. In traditional SEO, you optimize a page for a keyword. In AEO, you optimize individual sections so they are independently extractable and citable. A single H2 section is the unit of competition, not the article as a whole.

How RAG-based AI engines select content

When a user submits a query to an AI answer engine, the system typically does not search for the exact phrase. Instead, it decomposes the query into a set of sub-queries, each targeting a different aspect of the original question. This query fan-out process is common across generative engines.

Fan-out sub-queries are not fully consistent across repeated runs of the same query, which is why building for semantic category coverage beats optimizing for specific sub-query text. Your content needs to cover the semantic space of a topic, not just the primary keyword.

After retrieval, content is ranked at the chunk level. Chunks are evaluated for three qualities:

- Relevance: Does this chunk address the specific sub-query? Semantic proximity matters more than keyword frequency. Princeton GEO-Bench research confirmed that keyword stuffing performs below the unoptimized baseline in LLM engines, while adding quantified statistics improved citation rates by up to 41%.

- Clarity: Is the answer directly stated, or buried in context? AI systems reward content that leads with the answer, follows with evidence, and does not require reading surrounding sections to make sense.

- Trust: Does the content contain signals that AI systems associate with authority? Named authors, consistent brand entity signals, statistics attributed to verifiable sources, and structured data are all positive trust signals.
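Production answer engines rely on learned representations and ranking models whose details are largely not public. The following is therefore only a toy illustration of chunk-level scoring. It assumes simple stand-ins for each quality:

- bag-of-words cosine similarity for relevance
- an answer-first check for clarity
- a number plus a parenthetical source for trust

The weights and helper names are hypothetical.

```python
import math
import re
from collections import Counter


def tokens(text: str) -> list[str]:
    """Lowercase word tokens (a crude stand-in for a real tokenizer)."""
    return re.findall(r"[a-z0-9]+", text.lower())


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two term-count vectors."""
    dot = sum(count * b[term] for term, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def score_chunk(query: str, chunk: str) -> float:
    """Toy chunk score: hypothetical weights over relevance, clarity, and trust proxies."""
    query_terms = Counter(tokens(query))
    relevance = cosine(query_terms, Counter(tokens(chunk)))
    # Clarity proxy: the first sentence already shares terms with the query (answer first).
    first_sentence = re.split(r"(?<=[.!?])\s+", chunk.strip())[0]
    clarity = 1.0 if set(tokens(first_sentence)) & set(query_terms) else 0.0
    # Trust proxy: a number plus a parenthetical source with a year, e.g. "(Pew Research Center, July 2025)".
    trust = 1.0 if re.search(r"\d", chunk) and re.search(r"\([^)]*\d{4}\)", chunk) else 0.0
    return 0.6 * relevance + 0.2 * clarity + 0.2 * trust


query = "what is answer engine optimization"
chunks = {
    "answer-first": (
        "Answer engine optimization is the practice of structuring content so AI systems can cite it. "
        "Users clicked a traditional result 8% of the time when an AI summary appeared "
        "(Pew Research Center, July 2025)."
    ),
    "buried": "As discussed above, there are many factors. This approach matters for brands.",
}
for name, text in sorted(chunks.items(), key=lambda kv: score_chunk(query, kv[1]), reverse=True):
    print(f"{name}: {score_chunk(query, text):.2f}")
```

Term counts reward repeated query words, so this proxy would actually favor keyword stuffing. That is the opposite of the GEO-Bench finding above. Treat the sketch as an illustration of chunk-level competition, not as a model of any specific engine.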

How Google AI Overviews selects content

Google AI Overviews operates on a fundamentally different selection model than RAG-based chatbot engines. Rather than retrieving documents at query time through a vector search, AI Overviews draws from Google’s existing search index.

Organic ranking is the primary gateway: BrightEdge’s 16-month study found that AI Overview citation overlap with organic rankings grew from 32% to 54% between May 2024 and September 2025, with YMYL verticals like healthcare reaching 68–75% overlap. This means traditional SEO eligibility (crawlability, indexation, ranking signals, E-E-A-T) is the primary prerequisite for AI Overview inclusion.

Once a page is eligible through ranking, Google selects specific passages based on how well they match the informational intent of the query. This is functionally similar to featured snippet selection: Google identifies passages that directly answer the question, are concisely stated, and are supported by authoritative page-level signals.

The practical implication is that AI Overview optimization is largely featured snippet optimization applied systematically. Answer-first paragraph structure, FAQPage schema, and clear question-to-answer heading patterns all improve passage-level selection within an already-ranking page.

The key distinction from RAG: seoClarity’s analysis of the top 1,000 URLs ChatGPT cited in the US found that 25% have zero organic visibility in Google. That figure would be essentially impossible for AI Overviews, where organic ranking is table stakes.

Among ChatGPT’s top 3 most-cited URLs, that share rises to 50%. With chatbot engines, content can earn citation through the LLM’s training data or real-time web access entirely independent of Google rankings.

This is why AEO strategy requires both tracks: maintaining the SEO foundation that makes pages eligible for AI Overviews, while also building the semantic depth and entity authority that earns citation in chatbot engines.

The chunk as the unit of optimization

A content chunk typically consists of an H2 or H3 section, the content it introduces, and any structured elements (tables, lists, definitions) within it. For your content to be citation-eligible, each chunk must be independently answerable: someone reading only that section should get a complete, useful response.

This is the core structural requirement of AEO. It informs everything from how you write section openers to how you handle definitions to why you cannot use pronouns referencing earlier sections if you want AI engines to extract a later section in isolation.
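The sketch below treats each H2/H3 section as a chunk and flags bodies that lean on earlier sections. It assumes markdown-style `##`/`###` headings in the source text. The dependency-phrase list is illustrative, not exhaustive.

```python
import re

# Illustrative phrases that make a chunk depend on earlier sections.
DEPENDENCY_PHRASES = ("as discussed above", "as mentioned earlier", "this approach", "the above")


def split_into_chunks(markdown: str) -> list[tuple[str, str]]:
    """Split markdown into (heading, body) pairs at each H2 or H3 heading."""
    chunks = []
    heading, body = None, []
    for line in markdown.splitlines():
        match = re.match(r"^#{2,3}\s+(.*)", line)
        if match:
            if heading is not None:
                chunks.append((heading, "\n".join(body).strip()))
            heading, body = match.group(1).strip(), []
        elif heading is not None:
            body.append(line)
    if heading is not None:
        chunks.append((heading, "\n".join(body).strip()))
    return chunks


def dependency_flags(body: str) -> list[str]:
    """Return the dependency phrases found in a chunk body (case-insensitive)."""
    lowered = body.lower()
    return [phrase for phrase in DEPENDENCY_PHRASES if phrase in lowered]


doc = """## What is AEO?
Answer engine optimization (AEO) is the discipline of making your brand the easiest source to quote.

## How does it work?
As discussed above, this approach relies on structure.
"""
for heading, body in split_into_chunks(doc):
    flags = dependency_flags(body)
    print(heading, "->", "self-contained" if not flags else f"depends on context: {flags}")
```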

For the technical infrastructure behind AI-friendly content structure, see the technical GEO guide.

How do AEO, SEO, and GEO relate to each other?

AEO, SEO, and GEO target different output types:

- SEO = ranked links.

- AEO = brand mentions inside AI answer boxes (AI Overviews, PAA, voice).

- GEO = citations within long-form generative responses.

All three require search indexability, and none replaces the others.

The table below is the clearest way I have found to explain this distinction to teams trying to allocate budget and effort across all three.

| Dimension | SEO | AEO | GEO |
|---|---|---|---|
| Target output | Ranked link on SERP | Brand mention in AI answer boxes | Cited source in long-form generative response |
| Primary platform | Google, Bing (organic) | Google AI Overviews, AI Mode, ChatGPT, Perplexity, Gemini, Copilot, voice assistants | ChatGPT, Perplexity, Gemini, Copilot, Google AI Mode |
| Optimization unit | Page/keyword | Brand, topic, and answer block/chunk | Domain + entity ecosystem |
| Primary metric | Organic rank + CTR | Featured snippet appearances, zero-click impressions | Brand visibility score, citation share, and domain influence |
| Content requirement | Keyword relevance + E-E-A-T | BLUF structure + Q&A format + FAQPage schema | Authority signals + entity clarity + semantic depth |
| Failure mode | Algorithm update, link attrition | Content not extractable as a snippet | Brand entity gaps, thin topical coverage |

AEO is the practical bridge between SEO fundamentals and full GEO strategy.

If you have never run structured data, optimized for featured snippets, or written direct-answer content, AEO is where you start. GEO extends the scope to the entire generative AI ecosystem, including prompts that never touch a traditional search engine.

How to build an AEO strategy: the FIFI framework

The FIFI framework (Find, Implement, Focus, Increase) is the strategic model that organizes AEO execution into four repeatable pillars.

The five steps below are the implementation detail within those pillars:

- Steps 1 and 2 sit under Find (identify where you are missing and what to build).
- Step 3 sits under Implement (structure content for AI extraction).
- Step 4 sits under Focus (add the structured data that removes ambiguity about your content).
- Step 5 sits under Increase (build the authority signals and topical depth that make content trustworthy enough to cite).

The off-site side of the Increase pillar (brand authority and entity signals) is covered with live campaign data in the FIFI in action section that follows.

The steps are cumulative: skipping to step 4 without completing steps 1 through 3 produces minimal impact.

Find, step 1: map the fan-out sub-query space

AI engines do not search for the keyword your content targets. They decompose the query into sub-questions before retrieving content. The FAN methodology provides a practical framework for mapping this space. For any anchor query, you map seven sub-query types that an AI engine is likely to generate: definition, comparison, how-to, use case, objection, entity expansion, and metric.

Here is how it applies to this topic, with live Similarweb data (February 2026, worldwide):

| Sub-query type | Example | Worldwide volume | Zero-click rate | Flag |
|---|---|---|---|---|
| Definition | What is answer engine optimization | 1,461 | 82.6% | Citation play |
| Comparison | AEO vs SEO difference | 9,484 | 82.0% | Citation play |
| How-to | How to implement an AEO strategy | 1,629 | 72.6% | Citation play |
| Use case | AEO for B2B marketing | ~200 | ~70% | Citation play |
| Objection | Is AEO worth the investment | ~300 | ~65% | Mixed |
| Entity expansion | AEO tools software | 2,893 | 84.5% | Citation play |
| Metric | How to measure AEO performance | <100 | 0% | Click opportunity |

Note: AI Overview presence fluctuates monthly, which means zero-click rate is the more stable citation-play indicator.

Two things stand out:

- Every sub-query except the measurement one has a zero-click rate above 60%. That makes them primarily citation plays rather than traffic plays. The objection sub-query, at roughly 65%, is the borderline case flagged as mixed.

- The measurement sub-query is the anomaly at 0% zero-click: that is the one to rank for and drive clicks from. Structure your content accordingly.
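The sketch below classifies the table rows by zero-click rate. The text above uses 60% as the dividing line. The 70% and 40% cut-offs here are hypothetical, chosen so the output matches the table's Flag column, including the objection row marked Mixed.

```python
# Rows from the FAN table above (worldwide, February 2026): (type, example, zero-click %).
# Approximate values such as "~70%" are entered at their stated value.
FAN_ROWS = [
    ("Definition", "What is answer engine optimization", 82.6),
    ("Comparison", "AEO vs SEO difference", 82.0),
    ("How-to", "How to implement an AEO strategy", 72.6),
    ("Use case", "AEO for B2B marketing", 70.0),
    ("Objection", "Is AEO worth the investment", 65.0),
    ("Entity expansion", "AEO tools software", 84.5),
    ("Metric", "How to measure AEO performance", 0.0),
]

# Hypothetical cut-offs, chosen so the output matches the table's Flag column.
CITATION_PLAY_MIN = 70.0
MIXED_MIN = 40.0


def classify(zero_click_pct: float) -> str:
    """Label a sub-query as a citation play, a mixed case, or a click opportunity."""
    if zero_click_pct >= CITATION_PLAY_MIN:
        return "Citation play"
    if zero_click_pct >= MIXED_MIN:
        return "Mixed"
    return "Click opportunity"


for sub_type, example, zero_click in FAN_ROWS:
    print(f"{sub_type:<17} {zero_click:5.1f}%  {classify(zero_click):<17} {example}")
```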

Find, step 2: audit your content coverage

Once you have the fan-out map, check which sub-queries your existing content addresses. A sub-query is covered if there is a standalone section (H2 or H3) that directly answers it without requiring the reader to have read the surrounding sections first. If no such section exists, that sub-query is a gap.

Coverage gaps are your brief. Each uncovered sub-query type needs either a new section in an existing article, a new article if the volume justifies standalone treatment (300+ searches per month is a reasonable threshold), or an FAQ answer if the volume is low.
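The coverage decision can be written as a small function. This minimal sketch assumes one row per fan-out sub-query, holding its monthly volume and whether a standalone H2/H3 section already answers it. The 300-search threshold comes from the text. The 100-search cut-off between a new section and an FAQ answer is a hypothetical addition.

```python
NEW_ARTICLE_MIN = 300  # monthly searches; the rule of thumb above
NEW_SECTION_MIN = 100  # hypothetical: below this, an FAQ answer is usually enough


def recommend(monthly_volume: int, has_standalone_section: bool) -> str:
    """Turn one fan-out sub-query into a coverage action."""
    if has_standalone_section:
        return "covered"
    if monthly_volume >= NEW_ARTICLE_MIN:
        return "new article"
    if monthly_volume >= NEW_SECTION_MIN:
        return "new section in an existing article"
    return "FAQ answer"


# Illustrative inputs: volumes loosely follow the FAN table; coverage flags
# and the metric volume (listed only as "<100") are hypothetical.
audit = {
    "definition": (1461, True),
    "comparison": (9484, False),
    "use case": (200, False),
    "metric": (80, False),
}
for sub_query, (volume, covered) in audit.items():
    print(f"{sub_query:<11} -> {recommend(volume, covered)}")
```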

Implement, step 3: restructure content as atomic chunks (BLUF)

After auditing hundreds of GEO articles against AI citation patterns, I have found that the BLUF (Bottom Line Up Front) requirement is the single highest-leverage structural change, ahead of schema and ahead of link building. If you only do one thing from this framework, make it this.

- BLUF opener: Lead each section with a direct 30 to 60-word answer. This is what AI systems extract. Write it as if it were the only paragraph the reader will see.

- Explicit definitions: Every key concept needs a definition in the form “X is…” or “X is defined as…” Implied definitions are easily missed by LLM retrieval.

- No pronoun dependencies: “As discussed above” and “this approach” are extraction killers. Every chunk must use explicit noun references.

- Self-contained statistics: Every quantified claim needs the number, the population, the action, the timeframe, and the source. (“Research shows clicks are declining” is not a citable claim. “Users clicked on traditional search results 8% of the time when an AI summary appeared, compared to 15% without one, among 900 U.S. adults in March 2025 (Pew Research Center, July 2025)” is citable.)
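Some of these BLUF rules can be automated as a pre-publication lint. This minimal sketch assumes sections split into paragraphs by blank lines. It checks only three things: opener length, dependency phrases, and percentages without an inline parenthetical source. The phrase list and the "(Source, Month Year)" pattern are illustrative assumptions, not a standard.

```python
import re

# Illustrative phrases that tie a section to earlier text.
DEPENDENCY_PHRASES = ("as discussed above", "as mentioned earlier", "this approach")


def lint_section(section: str, min_words: int = 30, max_words: int = 60) -> list[str]:
    """Return BLUF problems for one section (paragraphs separated by blank lines)."""
    paragraphs = [p.strip() for p in section.strip().split("\n\n") if p.strip()]
    problems = []

    # 1. BLUF opener: the first paragraph should be a 30-60 word direct answer.
    opener_words = len(paragraphs[0].split()) if paragraphs else 0
    if not min_words <= opener_words <= max_words:
        problems.append(f"opener has {opener_words} words")

    # 2. No pronoun dependencies on earlier sections.
    lowered = section.lower()
    problems += [f"dependency phrase: {p}" for p in DEPENDENCY_PHRASES if p in lowered]

    # 3. Percentages should carry an inline source with a year, e.g. "(Pew Research Center, July 2025)".
    for i, paragraph in enumerate(paragraphs, start=1):
        if re.search(r"\d%", paragraph) and not re.search(r"\([^)]*\d{4}\)", paragraph):
            problems.append(f"unsourced statistic in paragraph {i}")
    return problems


example = (
    "Answer engine optimization (AEO) is the discipline of making your brand the most "
    "reliable, easy-to-quote source for the questions your customers ask across AI-powered "
    "systems. The goal is to be named and cited as the answer, not just to rank near it."
    "\n\n"
    "Research shows clicks fell 47% when AI summaries appeared."
)
print(lint_section(example))  # ['unsourced statistic in paragraph 2']
```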

Focus, step 4: add structured data

Structured data does not guarantee citation selection, but it removes ambiguity about what your content contains. The three most impactful schema types for AEO:

- FAQPage schema: Explicitly identifies the Q&A format AI systems look for. The most important schema type for AEO.

- Article schema: Provides machine-readable author, publish date, and organization signals. Freshness matters for rapidly evolving topics like AI search.

- HowTo schema: Identifies step-by-step process content. If you have implementation guides or numbered frameworks, HowTo schema makes the structure machine-readable.
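FAQPage markup is JSON-LD that follows the schema.org vocabulary. This minimal sketch generates it from question-and-answer pairs. The helper name is hypothetical. The answer text should match the answer visible on the page.

```python
import json


def faq_page_jsonld(qa_pairs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in qa_pairs
        ],
    }
    return json.dumps(data, indent=2)


snippet = faq_page_jsonld([
    ("What is answer engine optimization?",
     "Answer engine optimization (AEO) is the discipline of making your brand the most "
     "reliable, easy-to-quote source for the questions your customers ask across AI-powered systems."),
])
# Embed the output in the page inside <script type="application/ld+json"> ... </script>.
print(snippet)
```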

Increase, step 5: build authority signals and topical depth

- Quantified claims with primary sources: Adding statistics improved LLM citation rates by up to 41% according to the Princeton GEO-Bench research mentioned earlier. Every factual claim that can be supported with a number should be.

- Named frameworks and methodologies: AI systems retrieve conceptual structures. If you name a framework (FAN, BLUF, RAG), define each component, and apply it to a concrete example, that section becomes extractable as a teachable concept.

- Author and brand-entity signals: Consistent author attribution, Organization schema, and references to your brand as an entity strengthen the trust signals that AI systems use.

- Structured comparisons and tables: Tables and structured lists are retrieved preferentially because they are pre-organized for extraction.
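Author and brand-entity signals are commonly expressed as schema.org Article markup, with a Person author and an Organization publisher. The publisher's `sameAs` property links the brand to external profiles such as a Wikidata item. The sketch below uses placeholder values. Every name, URL, date, and the Wikidata ID are hypothetical.

```python
import json

# Hypothetical placeholder values: replace with your real author, brand, and profile URLs.
article = {
    "@context": "https://schema.org",
    "@type": "Article",
    "headline": "Answer Engine Optimization (AEO): What It Is and How It Works",
    "datePublished": "2026-02-20",
    "dateModified": "2026-02-20",
    "author": {"@type": "Person", "name": "Jane Example", "url": "https://example.com/authors/jane"},
    "publisher": {
        "@type": "Organization",
        "name": "Example Brand",
        "url": "https://example.com",
        # sameAs ties the brand entity to external profiles (placeholder IDs).
        "sameAs": [
            "https://www.wikidata.org/wiki/Q00000000",
            "https://www.linkedin.com/company/example",
        ],
    },
}
print(json.dumps(article, indent=2))
```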

FIFI in action: applying the framework to real brand data

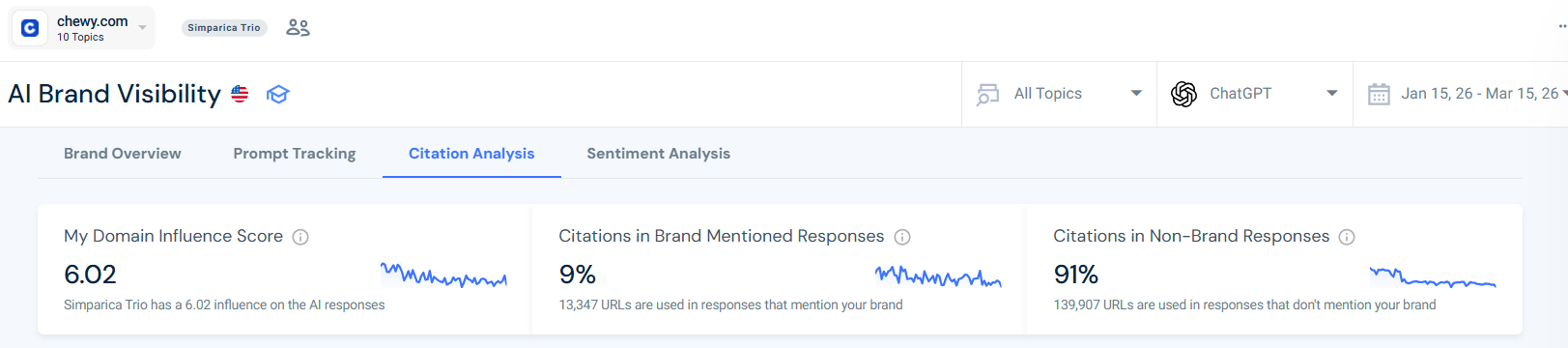

The FIFI framework translates AI Brand Visibility campaign data into a concrete AEO action plan. I applied it to Chewy using Similarweb’s AI Brand Visibility tool across two snapshots (December 2025 and February 2026, ChatGPT model, 100 prompts each) to show how comparing snapshots reveals what to prioritize.

FIFI is a four-pillar model designed for the AI search context, where the traditional keyword-to-content action plan breaks down. It answers four sequential questions:

- Find (which prompts am I missing?)

- Implement (is my content structured for extraction?)

- Focus (where should I build topical authority?)

- Increase (am I trusted off-site?)

Each pillar maps directly to data available in Similarweb AI Search Intelligence.

Period-over-period baseline: what changed and what it means

Before applying FIFI, you need two data points. The comparison between periods is often more instructive than a single snapshot.

Here’s what it looks like for Chewy:

| Metric | Dec 13, 2025 | Feb 13, 2026 | Change |
|---|---|---|---|
| Prompts tracked (ChatGPT) | 100 | 100 | — |
| Prompts mentioning Chewy | 6 (6%) | 7 (7%) | +17% |
| Prompts citing chewy.com URLs | 10 (10%) | 3 (3%) | -70% |
| Topics with Chewy presence | 6 of 10 | 5 of 10 | -1 topic |
| Positive sentiment mentions | 0 | 0 | Unchanged |
| Flea & Tick topic presence | 2 prompts | 0 prompts | Full regression |

The headline finding is not the mention rate, which barely moved (+17%). The headline finding is the citation rate: -70% in two months. Chewy went from being cited in 10 prompts to being cited in 3, while being mentioned in roughly the same number of prompts in both periods.
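The +17% and -70% figures are relative changes between the two snapshots. The sketch below recomputes them from the table's counts so that the divergence between mentions and citations is explicit. The alert condition at the end is a hypothetical rule of thumb, not a feature of any tool.

```python
# Counts from the Chewy snapshot table above (ChatGPT, 100 prompts per snapshot).
snapshots = {
    "Prompts mentioning Chewy": (6, 7),
    "Prompts citing chewy.com URLs": (10, 3),
    "Topics with Chewy presence": (6, 5),
}


def pct_change(before: int, after: int) -> float:
    """Relative change in percent between two snapshot counts."""
    return (after - before) / before * 100


for metric, (dec, feb) in snapshots.items():
    print(f"{metric:<30} {dec:>3} -> {feb:>3}  ({pct_change(dec, feb):+.0f}%)")

mentions = pct_change(*snapshots["Prompts mentioning Chewy"])
citations = pct_change(*snapshots["Prompts citing chewy.com URLs"])
# Hypothetical alert: mentions stable or rising while citations fall.
if mentions >= 0 and citations < 0:
    print("Mentions held or grew while citations fell: check content extractability.")
```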

This illustrates one of the most important distinctions in AI visibility measurement: being mentioned and being cited are not the same thing. A brand can appear in an AI answer as part of a list of options (mention) without having any of its pages used as a source (citation).

Mentions without citations usually signal that the AI knows your brand exists but does not trust your content enough to quote from it.

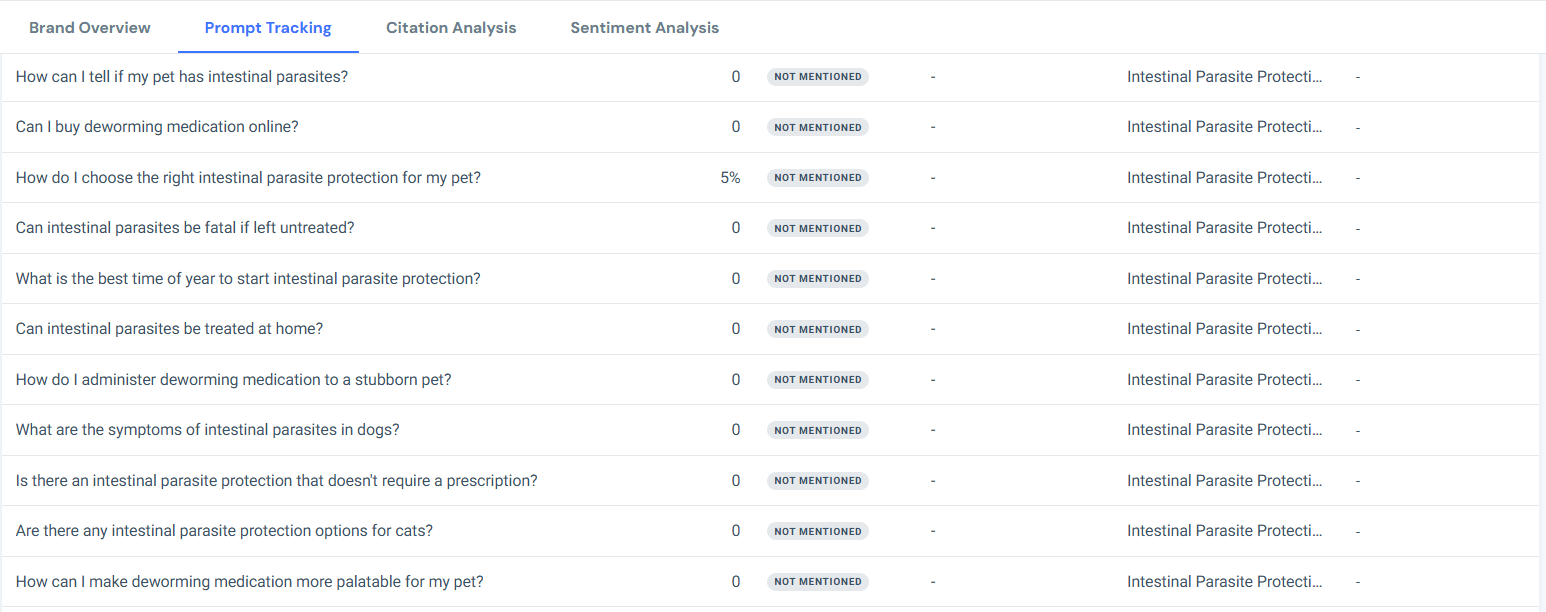

Find: identifying where Chewy is missing

The first FIFI pillar uses the Prompt Analysis tool to identify which prompts trigger Chewy mentions and which do not. The topic-level breakdown across both snapshots:

| Topic | Prompts tracked (Dec) | Chewy present (Dec) | Chewy present (Feb) | Change |
|---|---|---|---|---|
| Dog Health | 11 | 2 | 2 | Stable |
| Animal Health | 10 | 1 | 2 | +1 |
| Heartworm Disease | 9 | 1 | 1 | Stable |
| Flea & Tick Prevention | 11 | 1 | 0 | Regression |
| Flea & Tick Protection | 9 | 1 | 0 | Regression |
| Intestinal Worm Protection | 8 | 1 | 0 | Regression |
| Roundworm Protection | 10 | 0 | 1 | +1 |
| Hookworm Protection | 12 | 0 | 1 | +1 |
| Intestinal Parasite Protection | 12 | 0 | 0 | Absent |
| Pet Health | 8 | 0 | 0 | Absent |

The pattern is unambiguous. Chewy’s entire presence across both snapshots came from transactional and commercial prompts:

- “Can I buy dog health supplements online?”

- “Can I buy heartworm medication online?”

- “What are the most popular flea and tick prevention brands?”

- “What is the most affordable hookworm protection option?”

Every educational or informational prompt returned zero Chewy presence across both periods:

- “What are the long-term effects of heartworm disease?” Not mentioned

- “What are the symptoms of intestinal parasites in dogs?” Not mentioned

- “What is the difference between flea prevention and treatment?” Not mentioned

- “How do I prevent common dog health issues?” Not mentioned

Chewy is a strong answer to “where do I buy”, but is nearly invisible for “what should I know.” In AI search, that means the brand is missing the entire discovery and consideration phase, the part where preferences are formed before anyone searches for a place to buy.
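The Change column in the topic table can be derived mechanically from the two presence counts. The sketch below reproduces it. The labeling rules are inferred from the table, not taken from Similarweb's documentation.

```python
# Topic rows from the table above: (topic, prompts tracked, Dec presence, Feb presence).
TOPICS = [
    ("Dog Health", 11, 2, 2),
    ("Animal Health", 10, 1, 2),
    ("Heartworm Disease", 9, 1, 1),
    ("Flea & Tick Prevention", 11, 1, 0),
    ("Flea & Tick Protection", 9, 1, 0),
    ("Intestinal Worm Protection", 8, 1, 0),
    ("Roundworm Protection", 10, 0, 1),
    ("Hookworm Protection", 12, 0, 1),
    ("Intestinal Parasite Protection", 12, 0, 0),
    ("Pet Health", 8, 0, 0),
]


def change_label(dec: int, feb: int) -> str:
    """Reproduce the Change column: Absent, Regression, Stable, or a signed delta."""
    if dec == 0 and feb == 0:
        return "Absent"
    if feb == 0:
        return "Regression"
    if feb == dec:
        return "Stable"
    return f"{feb - dec:+d}"


for topic, prompts, dec, feb in TOPICS:
    print(f"{topic:<31} {prompts:>3} prompts  Dec {dec}  Feb {feb}  {change_label(dec, feb)}")

present_feb = [topic for topic, _, _, feb in TOPICS if feb > 0]
print(f"Topics with Chewy presence in February: {len(present_feb)} of {len(TOPICS)}")
```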

Implement: what the citation data says to build

The second FIFI pillar uses the Citation Analysis tool to understand which URLs are being cited and which are not. Chewy.com URLs that appeared in citations across the two snapshots:

- chewy.com/b/hip-joint-1568 (joint supplements category page)

- chewy.com/b/skin-coat-1570 (skin & coat supplement category page)

- chewy.com/education/dog/flea-and-tick/flea-and-tick-for-puppies (one education guide)

Two of the three are category pages, and the third is a single flea-and-tick guide for puppies. No broader editorial content, how-to guides, or symptom explainers made it into the set of cited URLs. The 70% drop in citations from December to February is consistent with the absence of BLUF-structured educational content on chewy.com.

The domains being cited for the educational queries Chewy is missing from tell the rest of the story: petmd.com (5 prompts), akc.org (2 prompts), vet.cornell.edu (2 prompts), thesprucepets.com, and kinship.com. These are editorial and veterinary authority sites.

For the informational queries tracked here, AI engines bypass Chewy’s domain entirely in favor of sources that have built explicitly answer-structured content on those topics.

The citation gap analysis is your content brief. Every domain appearing in citations for prompts in which Chewy is absent represents a content type that Chewy either lacks or has not structured for AI extraction.

Focus: where to build topical authority

The third FIFI pillar identifies which topic clusters to prioritize.

From the data, Chewy’s authority-building priority list is clear. The highest-volume, highest-gap topic clusters are:

- Flea & Tick (20 combined prompts across its two topics, zero presence in February)
- Intestinal Parasite Protection (12 prompts, zero presence in both periods)

Pet Health (8 prompts, zero presence) is a secondary priority.

Applying the FAN sub-query mapping from Step 1 to these topics gives the content brief: Chewy needs definition-type answers (“What is flea prevention?”), how-to answers (“How do I protect my dog from ticks?”), symptom answers (“What are the signs of intestinal parasites in dogs?”), and comparison answers (“Flea prevention vs flea treatment: what’s the difference?”) for each of these topics.
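The sketch below turns this Focus step into a first-draft brief. It ranks the topics with zero February presence by prompts tracked, then drafts one question per sub-query type for the top topics. It ranks individual topics rather than the combined clusters. The question templates are hypothetical wording, meant to be rewritten by an editor.

```python
# Topics with zero Chewy presence in the February snapshot, with prompts tracked (topic table above).
ZERO_PRESENCE_FEB = {
    "Flea & Tick Prevention": 11,
    "Flea & Tick Protection": 9,
    "Intestinal Worm Protection": 8,
    "Intestinal Parasite Protection": 12,
    "Pet Health": 8,
}

# Hypothetical question templates for the four sub-query types named above.
BRIEF_TEMPLATES = {
    "definition": "What is {topic}?",
    "how-to": "How do I approach {topic} for my dog?",
    "symptom": "What symptoms suggest my dog needs {topic}?",
    "comparison": "Which {topic} options exist, and how do they differ?",
}


def build_brief(gap_topics: dict[str, int], top_n: int = 3) -> dict[str, list[str]]:
    """Rank gap topics by prompts tracked and draft one question per sub-query type."""
    # sorted() is stable, so ties keep their original order.
    ranked = sorted(gap_topics.items(), key=lambda item: item[1], reverse=True)[:top_n]
    return {
        topic: [template.format(topic=topic.lower()) for template in BRIEF_TEMPLATES.values()]
        for topic, _ in ranked
    }


for topic, questions in build_brief(ZERO_PRESENCE_FEB).items():
    print(topic)
    for question in questions:
        print("  -", question)
```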

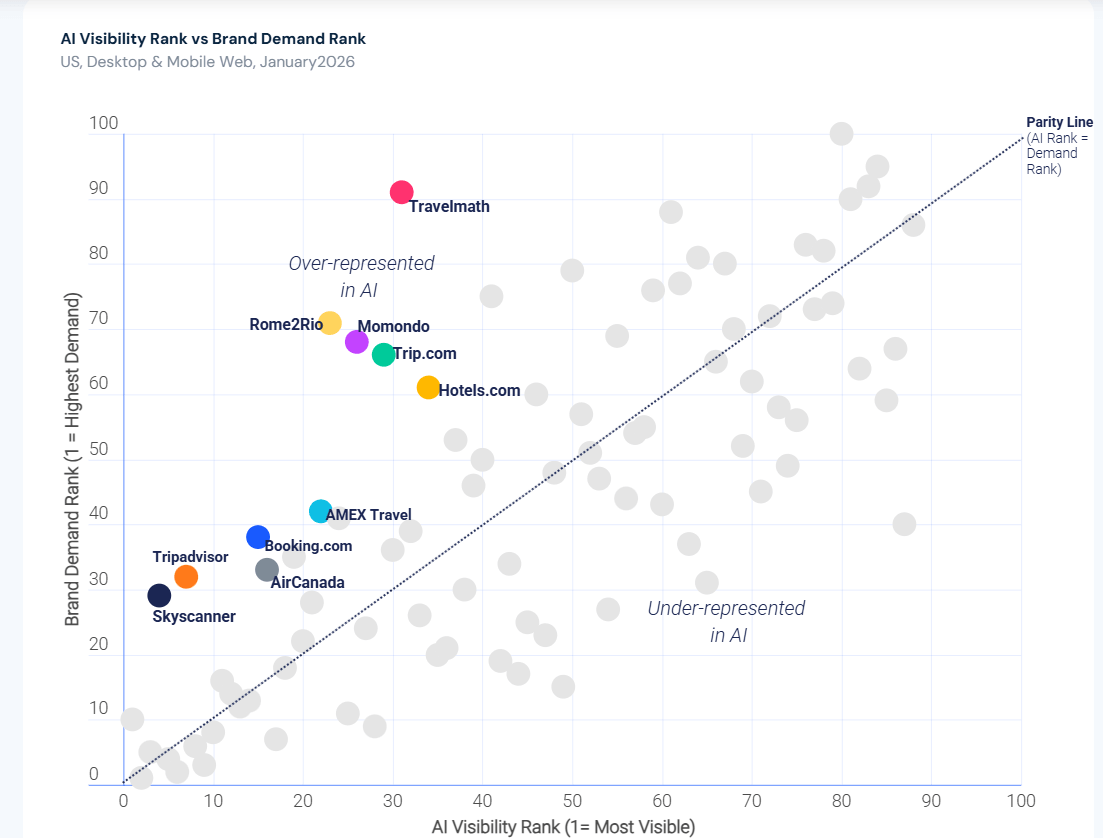

This authority-over-scale pattern is one of the most consistent findings in the 2026 AI Brand Visibility Report. Across all six sectors analyzed, specialist brands with deep, structured content on a specific topic consistently outrank larger competitors in AI visibility relative to their branded search demand.

NerdWallet and Bankrate outperform major banks in Finance. eCosmetics and drmtlgy.com outrank mass-market beauty brands. Travelmath beats Booking.com in Travel. These “overachievers” share one trait: content built around structured explanation, not brand scale.

Topical authority is not a tiebreaker in AI search optimization; it is the primary mechanism.

For Chewy, that means the path to AI visibility in Flea & Tick and Intestinal Parasite Protection runs directly through becoming the most authoritative educational source in those topics, not through having the largest pet e-commerce brand.

Increase: off-site signals and brand presence

The fourth FIFI pillar addresses the trust signals that make AI engines willing to cite your domain in the first place.

Chewy’s off-site signal profile, as revealed by the citation data, is that of a retailer: the brand is trusted for commerce-related queries but not referenced by the veterinary and editorial sources that dominate informational pet health content.

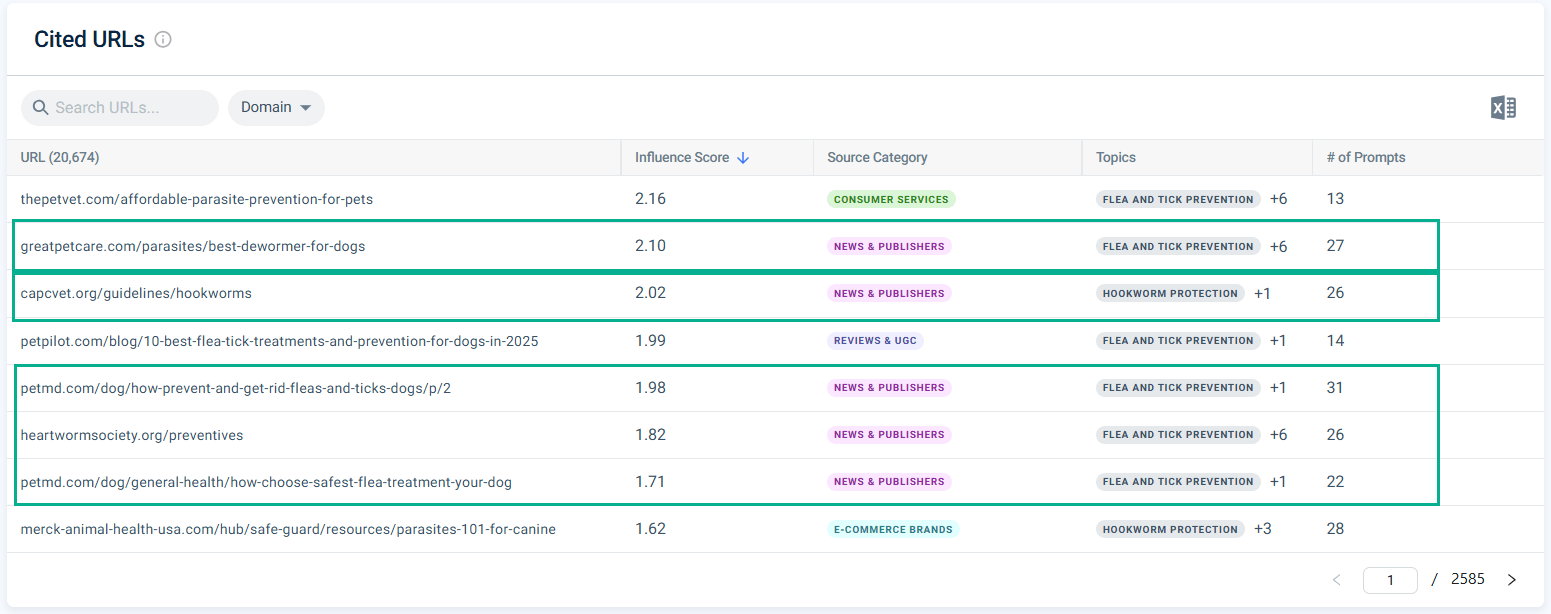

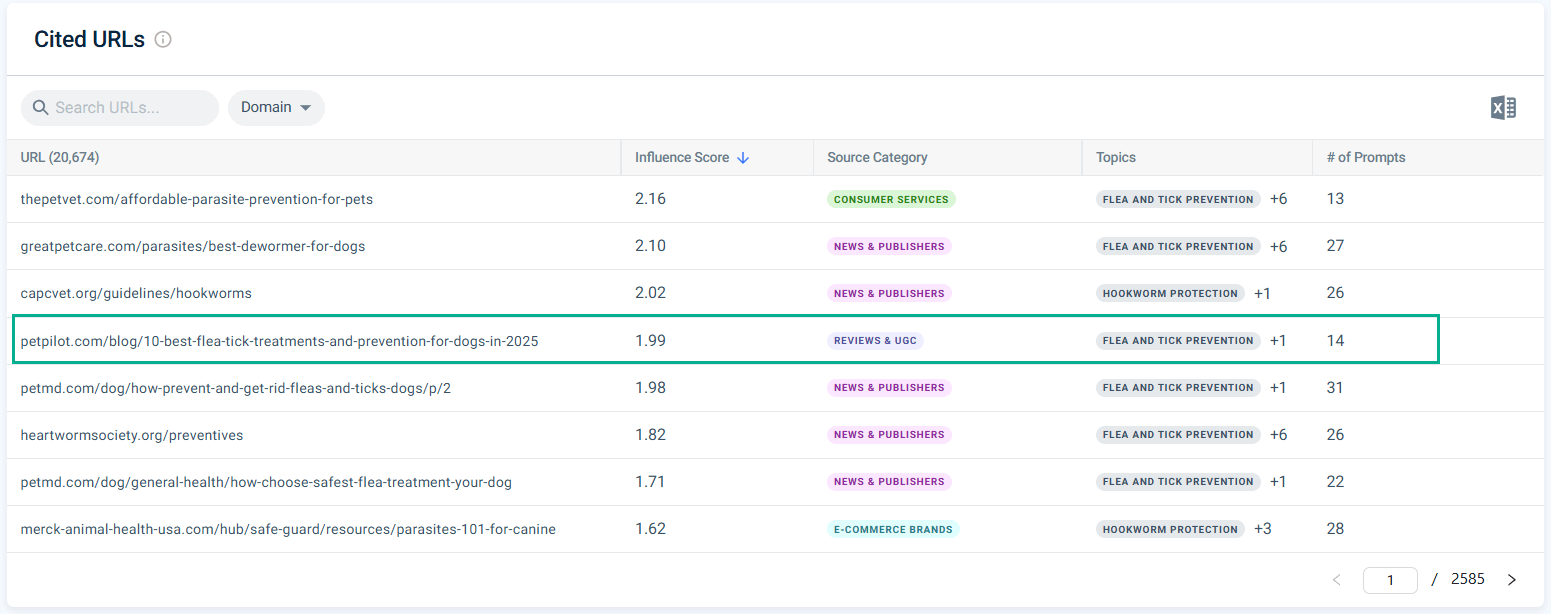

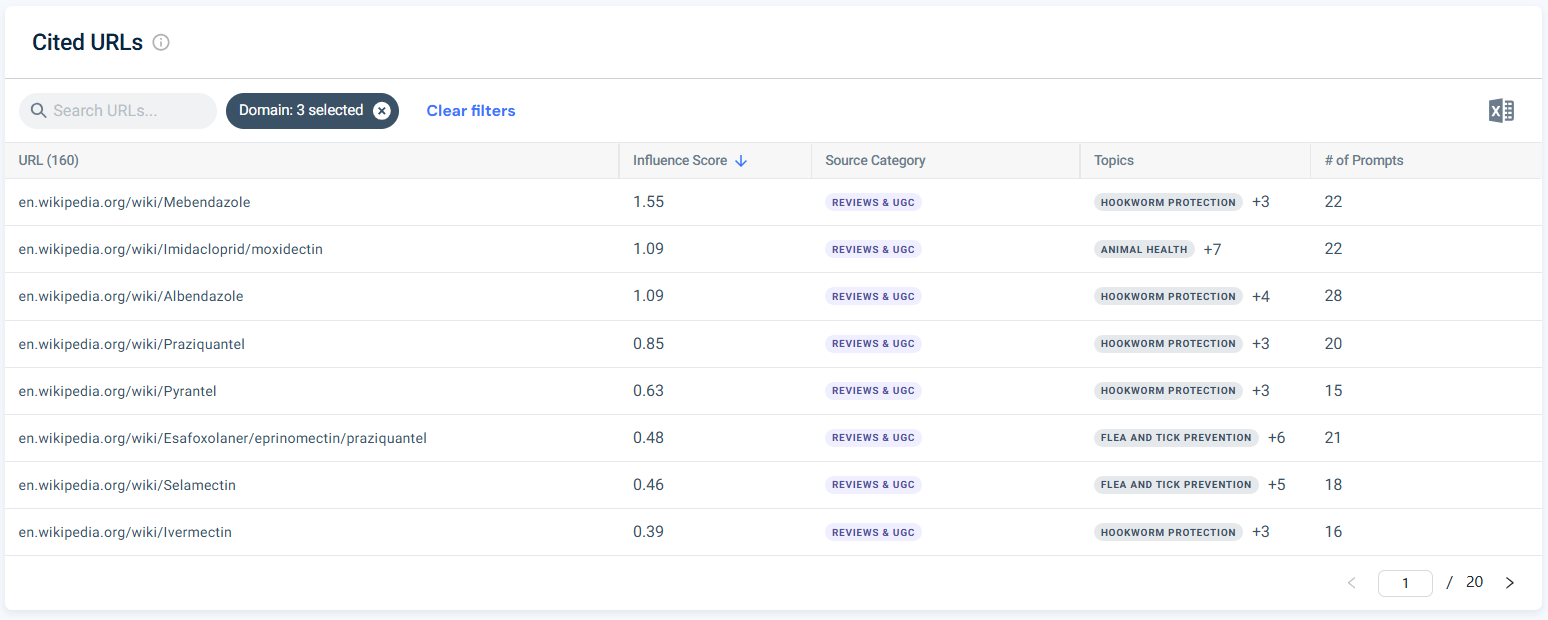

The most useful part of citation analysis, however, is not learning where your brand isn’t cited. It is seeing which brands and websites are cited instead.

With this data, you can take a generic priority-action list like the one below and aim it at the exact sources that LLMs cite:

- Digital PR targeting veterinary publications and pet health editorial sites in categories where Chewy is currently absent from citations (parasite protection, flea/tick prevention)

- Customer reviews and UGC content on third-party platforms that AI engines cite as community-sourced authority

- Wikidata and Wikipedia entity maintenance so AI systems have a structured, verifiable fact base for the Chewy brand

- Partnership and content integration with high-trust veterinary domains that currently appear in Chewy’s citation gap

| FIFI pillar | Question it answers | Chewy finding | Action |
|---|---|---|---|
| Find | Which prompts am I missing? | Absent from Flea & Tick and Intestinal Parasite entirely | Build educational content for these topic clusters |
| Implement | Is my content extractable? | Two category pages and one puppy flea-and-tick guide cited; no BLUF-structured educational guides | Add 30 to 60-word answer blocks to blog posts and build new informational pages |
| Focus | Where should I build authority? | Strong on transactional queries, invisible on informational ones | Prioritize definition, how-to, and symptom sub-queries in parasite and flea/tick categories |
| Increase | Am I trusted off-site? | petmd.com, akc.org, vet.cornell.edu cited instead of chewy.com for educational queries | Digital PR campaign targeting veterinary publications; develop or claim a Wikidata entity |

The FIFI framework is a repeatable diagnostic, not a one-time audit. Run it quarterly. The -70% citation collapse between the two Chewy snapshots was not visible at the mention level. Without tracking both metrics separately, you would have concluded that AI visibility was stable.

How to choose AEO tools for your brand

The right AEO tool for your brand depends on where you are in your optimization maturity. At minimum, citation tracking comes first: without a feedback loop, AEO is invisible work. Beyond that, the criteria below separate tools that genuinely support AEO workflows from tools that have bolted an “AI” label onto existing rank tracking.

There are six criteria worth evaluating before committing to any AEO toolset:

- AI engine coverage: The tool should track brand visibility across at least ChatGPT, Perplexity, Google AI Mode, and Gemini. Tools that monitor only one or two engines give you a partial picture that can badly misrepresent your actual share of voice (a brand that looks invisible in ChatGPT may be dominant in Perplexity, and vice versa).

- Citation vs. mention distinction: This is the most common gap in the market. Many tools report “brand mentions” in AI answers without distinguishing between appearing in a list of options (mention) and having your URL cited as a source (citation). As the Chewy data above shows, a brand can gain mentions while simultaneously losing 70% of its citations. A tool that blends the two will tell you everything is stable when it is not.

- Zero-click data at the keyword level: For AEO keyword research, zero-click rate is the primary prioritization signal because it tells you whether a query is a citation play or a traffic play. Most keyword tools do not report this metric at all. Without it, you are building a content strategy on search volume alone, which optimizes for the wrong outcome on high-zero-click queries.

- AI Overview and featured snippet tracking in rank monitoring: Your rank tracker should flag when a tracked keyword triggers an AI Overview and whether your content is included, not just where you rank in the blue links. PAA box tracking matters for the same reason: these SERP features are most directly correlated with AEO citation potential.

- Competitive benchmarking: You need to see which competitor domains are being cited for your target queries, not just whether your own domain appears. Without competitive citation data, you cannot run a citation gap analysis, which means you cannot identify the specific content types and off-site signals you are missing.

- Traffic integration: AI visibility metrics that cannot be connected to actual referral traffic leave you unable to calculate ROI or prioritize which citation improvements matter most commercially. A tool that shows both citation frequency and the visits generated per cited page closes the loop.

Top AEO tools by category

No single tool covers every AEO use case equally. The categories below reflect the four distinct jobs an AEO workflow requires: citation and brand monitoring, keyword research for AI sub-query mapping, SERP feature and AI Overview tracking, and structured data validation.

Best for citation and brand mention tracking

This is the non-negotiable starting point. Before optimizing anything, you need a baseline that distinguishes citations from mentions, covers multiple AI engines, and shows competitive context. Off-site link signals belong here too: the backlinks you earn through digital PR and editorial outreach are part of what makes AI engines willing to cite your domain.

- Similarweb AI Brand Visibility: Tracks brand mention rate, citation share, and share of voice across ChatGPT, Perplexity, Gemini, and Google AI Mode. Distinguishes cited URLs from unattributed mentions. Includes Prompt Analysis (query-level brand presence by topic), Citation Analysis (competitor domains cited with influence scores, includes URL-level data), and Sentiment Analysis (positive/neutral/negative framing with competitive benchmarking).

- Profound: Pure-play AI brand monitoring with mention tracking across some AI engines. Does not distinguish citation from mention, provides no zero-click keyword data, and has no traffic integration, so there is no way to connect AI visibility to downstream commercial impact.

- Brandwatch: Enterprise social and web listening platform with AI-generated content monitoring. Broad coverage across news, forums, and some AI surfaces. Not purpose-built for AEO: no citation vs. mention distinction, no SERP feature data, and no zero-click keyword research capability.

- Semrush (Brand Monitoring): Provides brand mention tracking across the open web and some AI surfaces as part of its broader platform. Does not separate AI citations from general mentions and lacks the prompt-level granularity needed to identify which specific query types trigger or exclude your brand in AI answers.

- Similarweb Backlink Analysis: Tracks and analyzes the inbound links generated by your off-site content and digital PR efforts. Use it to monitor whether the same sources that link to you are also driving mentions of your brand in AI engines.
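The citation-versus-mention distinction that runs through this category can be sketched in a few lines of Python. This is a simplified illustration under stated assumptions, not how Similarweb or any other tool classifies answers: it assumes you have already collected each AI answer's text and its list of cited URLs, and it uses naive substring matching for the brand name. The `AIAnswer` type, the `classify` and `citation_rate` helpers, and the example brand "Acme" are all hypothetical.

```python
from dataclasses import dataclass, field
from urllib.parse import urlparse


@dataclass
class AIAnswer:
    prompt: str
    text: str                                            # the generated answer
    cited_urls: list[str] = field(default_factory=list)  # sources linked explicitly


def classify(answer: AIAnswer, brand: str, domain: str) -> str:
    """Return 'citation', 'mention', or 'absent' for one AI answer.

    `domain` is expected in lowercase, e.g. "acme.com".
    """
    hosts = {(urlparse(u).hostname or "") for u in answer.cited_urls}
    # Citation: at least one linked source is on your domain or a subdomain of it.
    if any(h == domain or h.endswith("." + domain) for h in hosts):
        return "citation"
    # Mention: the brand is named in the text but none of your URLs is linked.
    if brand.lower() in answer.text.lower():
        return "mention"
    return "absent"


def citation_rate(answers: list[AIAnswer], brand: str, domain: str) -> float:
    """Share of tracked answers that cite your domain (0.0 when none tracked)."""
    if not answers:
        return 0.0
    return sum(classify(a, brand, domain) == "citation" for a in answers) / len(answers)


answers = [
    AIAnswer("best crm for startups", "Acme CRM is a common pick.", ["https://www.acme.com/crm"]),
    AIAnswer("crm pricing", "Options include Acme and others.", ["https://reviews.example/crm"]),
    AIAnswer("what is a crm", "A CRM is customer relationship software."),
]
print([classify(a, "Acme", "acme.com") for a in answers])  # ['citation', 'mention', 'absent']
```

Substring matching will miscount brands whose names are common words, so treat this as a way to reason about the two categories rather than as a measurement method.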

Best for keyword research for AI sub-query mapping

AEO keyword research requires two capabilities that most traditional tools lack: zero-click rates at the individual keyword level and SERP feature data indicating whether a query already triggers a featured snippet or an AI Overview.

- Similarweb SEO Intelligence: Provides zero-click rates per keyword, the primary signal for classifying sub-queries as citation plays versus traffic plays, as shown in the FAN table in Step 1. The keyword research tool also surfaces SERP feature data for each keyword, including featured snippet presence and AI Overview triggers, so you can identify which queries already have extractable answer boxes that your content could compete for or displace. It is the only major keyword tool that exposes both zero-click rates and SERP feature data in the same interface.

- Google Search Console: Essential for monitoring zero-click impressions on your own domain. High impressions with low CTR on an informational query is the clearest signal that an AI Overview or featured snippet is absorbing traffic. Does not provide competitor data or zero-click rates for queries you do not already rank for.

- Semrush: Industry-standard keyword research with volume, difficulty, and SERP feature data. Does not show zero-click rates at the individual keyword level, which means you cannot use it to distinguish citation plays from traffic plays without a separate data source.

- Ahrefs: Comprehensive keyword research and SERP data, including featured snippet tracking. Like Semrush, it does not provide zero-click rates per keyword, a meaningful gap for AEO sub-query prioritization. Strong for mapping search intent and competitive keyword gaps in traditional SEO.

- Moz: Keyword research with difficulty scores, SERP analysis, and ranking data. No zero-click rate data. Reliable for traditional keyword research workflows but not adapted for the citation-play vs. traffic-play classification that AEO sub-query mapping requires.
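Because this category treats per-keyword zero-click rate as the signal for separating citation plays from traffic plays, here is a minimal Python sketch of that classification. The 50% cutoff is a hypothetical placeholder (the article does not define a threshold), and so is the choice to rank citation plays that already trigger an AI Overview first. The 0.78 and 0.0 rates echo the figures this article quotes for the primary keyword and the measurement sub-query; the third keyword and all AI Overview flags are illustrative.

```python
from typing import NamedTuple


class SubQuery(NamedTuple):
    keyword: str
    zero_click_rate: float  # 0.0 to 1.0, from a keyword research export
    has_ai_overview: bool   # SERP feature flag: query already triggers an AI Overview


# Hypothetical cutoff; calibrate it against your own keyword data.
CITATION_PLAY_THRESHOLD = 0.5


def classify_sub_query(q: SubQuery, threshold: float = CITATION_PLAY_THRESHOLD) -> str:
    """Label a sub-query by where the user is likely to get the answer."""
    if q.zero_click_rate >= threshold:
        return "citation play"  # answered on the SERP: compete to be the cited source
    return "traffic play"       # users still click: optimize the page to earn the visit


def prioritize(queries: list[SubQuery]) -> tuple[list[SubQuery], list[SubQuery]]:
    """Split sub-queries; citation plays with an existing AI Overview come first."""
    citation = [q for q in queries if classify_sub_query(q) == "citation play"]
    traffic = [q for q in queries if classify_sub_query(q) == "traffic play"]
    # False sorts before True, so `not has_ai_overview` puts AI Overview queries
    # first; within each group, higher zero-click rates come first.
    citation.sort(key=lambda q: (not q.has_ai_overview, -q.zero_click_rate))
    return citation, traffic


queries = [
    SubQuery("answer engine optimization", 0.78, True),
    SubQuery("how to measure aeo", 0.0, False),
    SubQuery("aeo vs seo", 0.62, False),  # illustrative values
]
citation, traffic = prioritize(queries)
print("citation plays:", [q.keyword for q in citation])
print("traffic plays:", [q.keyword for q in traffic])
```

A single threshold is the simplest possible rule; a team might prefer a middle band of "hybrid" queries that get both BLUF structure and click-worthy depth.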

Best for AI Overview and featured snippet tracking

Once content is live, you need to track whether it is winning the SERP features most directly relevant to AEO: AI Overviews, featured snippets, and PAA boxes.

- Similarweb Rank Tracker:
  - Monitors tracked keywords for AI Overview appearances, flagging both when an AI Overview is present on a query and whether your content is included.
  - Tracks featured snippet wins and losses across content updates, and monitors PAA box presence across your question cluster.
  - Closes the loop between structural content changes (BLUF restructuring, schema additions) and the SERP feature outcomes those changes produce.

- SE Ranking:
  - Includes AI Overview detection in its rank tracking module.
  - A viable lightweight option for teams tracking a focused keyword set on a limited budget.
  - Does not provide AI citation tracking or zero-click keyword data.

- Semrush Position Tracking:
  - Tracks SERP feature appearances, including featured snippets and some AI Overview detection.
  - Strong for large-scale rank monitoring across competitive keyword sets.
  - Does not integrate citation tracking or zero-click data, so it measures presence but not the downstream AEO outcome.

- BrightEdge:
  - Enterprise rank tracking platform with AI Overview monitoring and featured snippet tracking.
  - Lacks AI citation tracking and zero-click keyword data.
  - Better suited to large enterprise SEO programs that need scale rather than full AEO workflow coverage.

- AccuRanker:
  - Fast, accurate rank tracking with SERP feature detection, including featured snippets and some AI Overview monitoring.
  - No AI citation tracking layer or zero-click keyword data.
  - A solid standalone rank tracker, but not designed for AEO-specific measurement workflows.

Best for technical SEO and structured data validation

AEO eligibility depends partly on the technical health and structured data implementation of your pages. AI engines cannot cite content they cannot reliably crawl and interpret.

- Similarweb Site Audit: Crawls your site and audits it for technical issues that affect AI citation eligibility: missing or malformed structured data, indexation gaps, crawl errors, and content accessibility problems. Integrates with Similarweb’s keyword and traffic data so you can prioritize fixes by commercial impact, not just issue count.

- Google Rich Results Test: The primary validation tool for structured data. Tests any page or code snippet and previews how rich results will render. Free, authoritative, and the correct final check before publishing any schema changes.

- Schema.org validator: Validates markup against the full Schema.org specification. Useful for checking complex nested schemas (e.g., Organization + WebPage + FAQPage combinations) that the Rich Results Test does not fully surface.

- Screaming Frog SEO Spider: Crawls your site and flags pages where structured data is missing, malformed, or inconsistent with page content. Widely used for technical SEO audits at scale. Desktop-based with no integration into keyword, ranking, or citation data.

What is the best all-round AEO tool?

For teams that want a single platform covering the complete AEO workflow, from sub-query mapping to citation tracking to SERP feature monitoring to traffic attribution, Similarweb’s AI Search Intelligence suite is the only option that integrates all four jobs.

The suite brings together what no other tool currently combines in one place:

- Keyword research with zero-click rates and SERP feature data.

- Rank tracking with AI Overview and featured snippet monitoring.

- Brand visibility across four major AI engines with the citation vs. mention distinction.

- Competitive citation benchmarking through Citation Analysis.

- Prompt-level brand tracking through Prompt Analysis.

- Sentiment tracking through Sentiment Analysis.

- Actual AI referral traffic by landing page through the AI Traffic Tracker.

- Backlink monitoring through Backlink Analysis.

- Full technical SEO auditing through Site Audit.

The practical consequence is that every step in this guide (building the FAN sub-query table, running the FIFI framework, and setting the KPI measurement baseline) can be executed end-to-end without switching platforms.

That is not a minor convenience. Every tool switch in a workflow introduces latency, attribution gaps, and inconsistencies in how the same query is classified across systems.

Other tools address individual parts of the AEO problem. Similarweb addresses the full loop: research, publish, track, attribute, repeat.

How to measure AEO performance

AEO performance is measured through five KPIs: AI citation frequency, brand mention rate, share of voice across AI engines, fan-out coverage score, and zero-click rate trend. These differ fundamentally from traditional SEO rank-and-click metrics.

Here is the thing most teams miss: the measurement sub-query (“how to measure AEO”) is the only one in the entire AEO keyword cluster with a 0% zero-click rate. That is not a coincidence.

When people want to understand a concept, they accept AI answers. When they need to implement something, they click through to find the real answer. Measurement is operational, meaning this section drives actual traffic to this article.

The 5 AEO KPIs

| KPI | What it measures | Tool | Target |
|---|---|---|---|
| AI Overview appearances | Tracked keywords triggering an AI Overview citing your content | Similarweb Rank Tracker | Grow month over month |
| Featured snippet count | Keywords where your content wins the featured snippet | Similarweb Rank Tracker | Track wins/losses after content updates |
| PAA box presence | Keywords where your content appears in People Also Ask | Similarweb Rank Tracker | Expand coverage across question cluster |
| Zero-click impressions | Impressions from queries where users see your brand without clicking | Google Search Console (high impressions, low CTR) | Monitor brand recall signal |
| Fan-out coverage score | % of 7 question types with indexed, answer-structured content | Manual content audit | 7/7 sub-query types covered |
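The fan-out coverage score in the table is simple arithmetic: covered sub-query types divided by seven. This Python sketch assumes your manual audit records, for each type, whether indexed, answer-structured content exists. The `type_1` to `type_7` labels and the `fan_out_coverage` helper are hypothetical placeholders; substitute the seven types from your own fan-out map.

```python
# Placeholder labels: replace with the 7 sub-query types from your fan-out map.
SUB_QUERY_TYPES = [f"type_{i}" for i in range(1, 8)]


def fan_out_coverage(audit: dict[str, bool]) -> tuple[int, float]:
    """Return (types covered, coverage %) across the 7 sub-query types.

    `audit` maps a type label to True when the manual content audit found
    indexed, answer-structured content for it. Missing labels count as uncovered.
    """
    unknown = set(audit) - set(SUB_QUERY_TYPES)
    if unknown:
        raise ValueError(f"unexpected sub-query types: {sorted(unknown)}")
    covered = sum(audit.get(t, False) for t in SUB_QUERY_TYPES)
    return covered, 100 * covered / len(SUB_QUERY_TYPES)


# Hypothetical audit result: four of the seven types covered.
audit = {"type_1": True, "type_2": True, "type_3": False, "type_4": True, "type_5": True}
covered, pct = fan_out_coverage(audit)
print(f"{covered}/7 sub-query types covered ({pct:.0f}%)")  # 4/7 sub-query types covered (57%)
```

Rejecting unknown labels is a deliberate choice: a typo in the audit sheet would otherwise silently lower the score.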

Setting a baseline

Before you can measure progress, you need a citation baseline. The process has three steps.

- Define your query set: the 10 to 20 prompts that represent your audience’s actual questions in the topic area. These should be anchor queries (15-25 words, conversational phrasing) rather than seed keywords. The sub-query types from your fan-out map provide the structure.

- Run your AI Brand Visibility campaign against those queries and record the starting state: which prompts cite you, which cite competitors, which cite neither.

- Set your 90-day target. For a topic where you already have indexed, authoritative content, a realistic initial target is to move from zero citations to being a top-3 cited source on at least 3 of your 7 sub-query types within 90 days of publishing BLUF-structured updates.
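The three baseline steps can be tracked with a small script. This Python sketch rests on assumptions of our own: you record, per prompt, its sub-query type and the domains the AI answer cited, and "top-3 cited source" is read as being among the three domains cited most often across that type's prompts. That is one possible interpretation, not a definition from any tool. The `PromptResult` type, the helper functions, the placeholder type labels, and the `.example` domains are hypothetical.

```python
from collections import Counter, defaultdict
from dataclasses import dataclass


@dataclass
class PromptResult:
    prompt: str
    sub_query_type: str       # which fan-out type the prompt represents
    cited_domains: list[str]  # domains the AI answer cited for this prompt


def baseline_state(results: list[PromptResult], you: str,
                   competitors: set[str]) -> dict[str, list[str]]:
    """Bucket prompts: cites you, cites a tracked competitor (not you), or neither."""
    state: dict[str, list[str]] = {"cites_you": [], "cites_competitors": [], "cites_neither": []}
    for r in results:
        if you in r.cited_domains:
            state["cites_you"].append(r.prompt)
        elif competitors & set(r.cited_domains):
            state["cites_competitors"].append(r.prompt)
        else:
            state["cites_neither"].append(r.prompt)
    return state


def top3_types(results: list[PromptResult], you: str) -> set[str]:
    """Sub-query types where `you` is among the three most-cited domains."""
    counts = defaultdict(Counter)
    for r in results:
        # Count each domain at most once per answer.
        counts[r.sub_query_type].update(set(r.cited_domains))
    # Ties at the third position are not handled specially.
    return {t for t, c in counts.items() if you in {d for d, _ in c.most_common(3)}}


def meets_90_day_target(results: list[PromptResult], you: str, min_types: int = 3) -> bool:
    """True when `you` is a top-3 cited source on at least `min_types` sub-query types."""
    return len(top3_types(results, you)) >= min_types


results = [
    PromptResult("prompt 1", "type_1", ["you.example", "rival.example"]),
    PromptResult("prompt 2", "type_1", ["rival.example"]),
    PromptResult("prompt 3", "type_2", ["wiki.example"]),
]
print(baseline_state(results, "you.example", {"rival.example"}))
print(meets_90_day_target(results, "you.example"))
```

Rerunning the same prompt set at 30, 60, and 90 days and diffing the buckets gives a simple view of movement against the baseline.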

For the full ROI model, including how to connect AI visibility to the downstream pipeline, see the AI visibility ROI measurement guide.

To set up ongoing tracking, see how to track AI visibility with Similarweb.

The AI visibility race has already started

Here is the summary of AEO in one sentence: structure your content so AI systems can extract the answer, not just find the page.

The structural change in how information is consumed is not a traffic dip. Zero-click rates above 75% on informational topics, AI Overviews triggering in 18% of Google searches, users ending sessions after reading an AI summary without clicking anywhere: this is a permanent behavioral recalibration.

And the Chewy data above shows that even brands with a strong general AI presence can experience a 70% decline in citations in two months without any changes to their own content, simply because the competitive citation landscape shifted.

The practical implication is straightforward. Every section of your content should open with a standalone direct answer. Every key concept needs an explicit definition. Every quantified claim needs a source, a timeframe, and a population. Every H2 should be independently citable by an AI system that has never read the rest of your article.

None of this is fundamentally new as a content practice. What is new is that these standards are now the entry price for visibility, not a nice-to-have. The brands establishing citation authority now, while the methodology is still being worked out and most teams are still focused on rank position, will be the ones AI engines pull from two years from now.

Track your AI Overview, featured snippet, and PAA appearances across your question cluster with Similarweb Rank Tracker. That SERP feature baseline is where AEO measurement starts. When you are ready to extend into the generative answer layer, bring in Similarweb AI Brand Visibility to track brand citations, share of voice, and sentiment across ChatGPT, Perplexity, Gemini, and Google AI Mode.

FAQ

What is answer engine optimization?

Answer engine optimization (AEO) is the practice of structuring web content so AI-powered systems can extract it and deliver it as a direct response to a user query, with your brand named as the source. AEO differs from traditional SEO in its unit of optimization: where SEO targets a full page for a keyword, AEO targets individual content sections that must stand alone as citable answers.

AEO complements SEO rather than replacing it. Strong organic authority is the prerequisite, while AEO is the structural layer that determines whether eligible content gets selected as the answer.

What is the difference between AEO and SEO?

SEO (Search Engine Optimization) is defined as the practice of optimizing web pages to rank in traditional search engine results and earn clicks. AEO (Answer Engine Optimization) is defined as the practice of structuring content so AI-powered systems extract it and cite it as a direct answer, whether or not the user clicks through.

The key structural difference: SEO optimizes at the page and keyword level; AEO optimizes at the section level, requiring each content chunk to be independently answerable without surrounding context. Both require E-E-A-T signals and technical crawlability.

AEO adds three requirements SEO does not: BLUF-structured openings, explicit entity definitions, and schema markup (FAQPage, HowTo, Article) that signals answer format to AI extraction systems.
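As an illustration of the schema requirement, here is a minimal Python sketch that builds FAQPage JSON-LD from question-and-answer pairs using the Schema.org `FAQPage`, `Question`, and `Answer` types. The `faq_page_jsonld` helper is hypothetical, and valid Schema.org markup does not guarantee that Google will show a rich result, so check the output with the Google Rich Results Test before publishing.

```python
import json


def faq_page_jsonld(pairs: list[tuple[str, str]]) -> str:
    """Serialize (question, answer) pairs as a Schema.org FAQPage JSON-LD string."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in pairs
        ],
    }
    return json.dumps(data, indent=2, ensure_ascii=False)


pairs = [
    ("What is answer engine optimization?",
     "Answer engine optimization (AEO) is the practice of structuring web content "
     "so AI-powered systems can extract it and deliver it as a direct response "
     "to a user query, with your brand named as the source."),
]
# Place the output inside a <script type="application/ld+json"> element in the page HTML.
print(faq_page_jsonld(pairs))
```

Generating the markup from the same Q&A source that renders the visible FAQ keeps the schema consistent with the page content, which is the mismatch that crawlers such as Screaming Frog flag.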

What is an answer engine?

An answer engine is any AI-powered system that delivers a direct synthesized response to a user query instead of a list of ranked links. Answer engines retrieve content from multiple sources, extract relevant passages, and synthesize a single cohesive response, citing some sources explicitly.

Current answer engines include Google AI Overviews, Google AI Mode, ChatGPT (with web search enabled), Perplexity, Microsoft Copilot, Gemini, and voice assistants including Siri and Google Assistant.

Answer engines differ from traditional search engines in that they do not require the user to evaluate and click individual results: the answer is constructed and delivered in one response, often without a follow-up click.

How does AEO work with Google AI Overviews?

Google AI Overviews select content using Google’s existing search index, not real-time retrieval. A page must already rank organically for the query or related queries before Google will consider it as a source. BrightEdge’s 16-month study found that AI Overview citation overlap with organic rankings grew from 32% to 54% between May 2024 and September 2025. Once a page is index-eligible, Google selects passages that directly answer the query in 40–80 words, are concisely stated, and are supported by authoritative page-level signals.

To improve AI Overview citation: structure each section with a BLUF opener, implement FAQPage or HowTo schema, and ensure the page already ranks for the target query or closely related queries.
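The 40–80 word passage length mentioned in this answer suggests a simple pre-publish check. This Python sketch is a heuristic, not a description of how Google selects passages: it assumes you supply each section as plain text keyed by its heading, treats the first non-empty paragraph as the BLUF opener, and flags openers outside 40 to 80 words. The `check_bluf_openers` helper is hypothetical.

```python
import re

MIN_WORDS, MAX_WORDS = 40, 80  # passage length range quoted for AI Overview selection


def check_bluf_openers(sections: dict[str, str]) -> dict[str, tuple[int, bool]]:
    """Map each heading to (opener word count, whether it falls within range)."""
    report = {}
    for heading, body in sections.items():
        # Paragraphs are separated by blank lines; the first non-empty one is the opener.
        paragraphs = [p for p in re.split(r"\n\s*\n", body.strip()) if p.strip()]
        opener = paragraphs[0] if paragraphs else ""
        words = len(opener.split())
        report[heading] = (words, MIN_WORDS <= words <= MAX_WORDS)
    return report


draft = {
    "What is an answer engine?": (
        "An answer engine is any AI-powered system that delivers a direct synthesized "
        "response to a user query instead of a list of ranked links. Answer engines "
        "retrieve content from multiple sources, extract relevant passages, and "
        "synthesize a single cohesive response, citing some sources explicitly.\n\n"
        "Current answer engines include Google AI Overviews and Perplexity."
    ),
    "How long does AEO take?": "It depends.",
}
for heading, (words, ok) in check_bluf_openers(draft).items():
    print(f"{'OK  ' if ok else 'FIX '}{words:3d} words  {heading}")
```

Whitespace splitting counts hyphenated terms such as "AI-powered" as one word, which is close enough for a screening check.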

What tools do I use for AEO?

AEO tooling covers four distinct jobs: citation and brand-mention tracking, keyword research adapted for AI sub-query mapping, AI Overview and featured snippet tracking, and structured data validation. The most critical category is citation tracking, because it provides the feedback loop that makes AEO measurable. Without it, you cannot tell whether content changes are producing citation gains or losses.

Similarweb AI Brand Visibility covers citation tracking across ChatGPT, Perplexity, Gemini, and Google AI Mode, distinguishing cited URLs from unattributed mentions. Similarweb SEO Intelligence provides zero-click rates per keyword, which is the primary signal for classifying sub-queries as citation plays versus traffic plays.

Google Rich Results Test validates structured data before publishing. Google Search Console tracks zero-click impressions on your own domain.

Is AEO the same as GEO?

AEO (Answer Engine Optimization) and GEO (Generative Engine Optimization) are related but distinct disciplines. AEO is defined as optimizing content to be extracted and cited as a direct answer to a specific question, across both SERP-level features (AI Overviews, PAA, featured snippets) and AI chat platforms. GEO is defined as optimizing your entire content and entity ecosystem to maximize brand share of voice, narrative control, and domain influence across the full generative AI landscape, including prompts that never touch a traditional search engine.

AEO is answer-level optimization; GEO is brand-level optimization. AEO is the practical entry point into GEO strategy.

How long does AEO take to show results?

For websites with existing indexed content and baseline domain authority, BLUF structural updates and schema additions can produce measurable AI citation improvements within 30 to 60 days of Google re-crawling the updated pages. New content targeting uncovered sub-query types takes longer: building topical authority in a new area typically requires 60 to 120 days from publication before citation rates stabilize.

Voice assistant citation tends to update more slowly than AI Overview inclusion. The timeline depends on three variables: domain authority in the target topic, competitiveness of the query cluster, and how frequently the AI engine refreshes its citation pool for that query.

Tracking AI Overview appearances in Similarweb Rank Tracker provides the earliest available signal of whether structural changes are producing citation gains.

How do I measure AEO success?

AEO success is measured across three layers.

- Layer 1 (SERP-level answer features): featured snippet count, People Also Ask box appearances, and AI Overview inclusion for tracked queries, measured in Similarweb Rank Tracker.

- Layer 2 (AI citation and brand visibility): citation frequency, brand mention rate, and share of voice across ChatGPT, Perplexity, Gemini, and Google AI Mode, measured in Similarweb AI Brand Visibility.

- Layer 3 (downstream traffic and conversions): AI referral visits by landing page and associated conversions, measured via the AI Traffic Tracker.

Zero-click impressions in Google Search Console (high impressions, low CTR on informational queries) serve as an early proxy for AI Overview absorption.
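The Search Console proxy described above can be approximated from a performance export. This Python sketch assumes a CSV with `Query`, `Clicks`, and `Impressions` columns (the column names are parameters, so rename them to match your own export) and uses hypothetical thresholds of at least 1,000 impressions and a CTR below 1%; neither threshold comes from this article, and the sample rows are made up.

```python
import csv
import io

MIN_IMPRESSIONS = 1000  # hypothetical threshold for "high impressions"
MAX_CTR = 0.01          # hypothetical threshold for "low CTR" (1%)


def flag_zero_click_candidates(csv_text: str, query_col: str = "Query",
                               clicks_col: str = "Clicks",
                               impressions_col: str = "Impressions") -> list[tuple[str, int, float]]:
    """Return (query, impressions, ctr) for rows with high impressions and low CTR."""
    flagged = []
    for row in csv.DictReader(io.StringIO(csv_text)):
        impressions = int(row[impressions_col].replace(",", ""))
        clicks = int(row[clicks_col].replace(",", ""))
        if impressions < MIN_IMPRESSIONS:
            continue
        ctr = clicks / impressions  # recomputed rather than parsed from a "%" string
        if ctr < MAX_CTR:
            flagged.append((row[query_col], impressions, ctr))
    # Largest impression volume first.
    return sorted(flagged, key=lambda r: -r[1])


sample = """\
Query,Clicks,Impressions
what is aeo,12,4800
aeo tools,90,2100
aeo vs geo,3,650
"""
for query, impressions, ctr in flag_zero_click_candidates(sample):
    print(f"{query}: {impressions} impressions, CTR {ctr:.2%}")
```

Treat flagged queries as candidates to check manually for an AI Overview or featured snippet on the live results page, not as proof that one is absorbing the clicks.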
