What Is AI Visibility?

More than 8.6 billion times in February 2026, people visited generative AI platforms instead of typing into a search box.

I arrived at this figure by creating a Custom Industry in Similarweb’s market research tool, using the following domains:

This, of course, completely ignores Google’s AI Overviews and AI Mode, but unfortunately, they don’t operate on separate domains. However, we can assume they have widespread usage (as we see all our organic traffic is gone, haha), and that the numbers are much higher than I estimate here.

Our data also shows that the number grew 76% year over year. Most brands have no idea whether they appear in those answers, and no system is in place to find out. That gap has a name: AI visibility.

What still surprises me is how many experienced SEO teams treat AI visibility as a future problem. It isn’t. The shortlist forming in ChatGPT or other chatbots right now is shaping purchase decisions before a single search is ever made. If your brand isn’t in those answers, you’re not ranking lower, you’re absent from the conversation entirely.

This guide defines AI visibility, explains how it differs from traditional SEO, and shows how to measure and improve your brand’s presence across ChatGPT, Perplexity, Google AI Overviews, and other AI chatbots. I’ll also cover adapting your budget for AI search optimization, because this is no longer an experiment you run with spare capacity; it’s a core investment decision.

What is AI visibility?

AI visibility is defined as a marketing metric that measures how often and how accurately a brand is mentioned, cited, or recommended in AI-generated answers across AI platforms. Unlike a search ranking, AI visibility is binary within any single answer: your brand either appears in it or it does not. There is no position seven. The "how often" comes from counting those yes/no outcomes across many prompts and platforms.

The dimensions of AI visibility

AI visibility is defined as the degree to which an AI engine recognizes, retrieves, and surfaces a brand when generating an answer to a user’s query. It has four measurable dimensions:

Platform breadth – the range of AI platforms on which your brand appears. A brand that appears consistently in ChatGPT responses but is absent from Perplexity and Google AI Overviews has narrow platform breadth.

Query depth – how many relevant prompts in your category trigger your brand’s appearance. A brand may appear in 80% of “best CRM for small business” responses but 0% of “CRM for enterprise teams” responses.

Citation quality – whether AI engines reference your content as a primary source (with a link) or mention your brand abstractly without attribution. Citations carry more weight than unlinked mentions for both traffic and authority signals.

Sentiment – the tone in which your brand is described when it appears. A brand mentioned alongside phrases like “reportedly struggles with” or “some users have found” is visible, but not in a way that builds trust.

Related terms you’ll encounter (AI mindshare, LLM share of voice, and generative engine presence) all point at the same underlying concept. AI share of voice typically refers specifically to the competitive share metric: how your brand’s mention frequency compares to competitors for a given topic.

The distinction between AI brand mentions and citations matters here. A mention is when your brand name appears in the AI’s response. A citation is when the AI links to your content as a source. Both are components of AI visibility, but they operate differently: mentions drive awareness and shape buyer perception, while citations can drive referral traffic and signal trust to the AI engine itself.
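To make the mention/citation distinction concrete, here is a minimal Python sketch that labels a single AI answer for one brand. The function name, its parameters, and the idea of receiving the answer text and its cited URLs as separate inputs are assumptions for illustration; no AI platform exposes its answers in exactly this shape. It also assumes URLs include a scheme such as `https://`.

```python
from urllib.parse import urlparse


def classify_appearance(answer_text: str, cited_urls: list[str],
                        brand: str, brand_domain: str) -> str:
    """Label one AI answer as 'citation', 'mention', or 'absent' for a brand."""

    def host(url: str) -> str:
        # Normalize the hostname so "www.acme.example" matches "acme.example".
        netloc = urlparse(url).netloc.lower()
        return netloc[4:] if netloc.startswith("www.") else netloc

    # A citation: at least one linked source lives on the brand's own domain.
    cited = any(
        host(u) == brand_domain or host(u).endswith("." + brand_domain)
        for u in cited_urls
    )
    if cited:
        return "citation"
    # A mention: the brand name appears in the text without a link to the brand.
    if brand.lower() in answer_text.lower():
        return "mention"
    return "absent"
```

An answer can contain both a mention and a citation; this sketch reports only the stronger of the two, which is a simplification you may not want in a real tracker.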

How AI answers are actually built

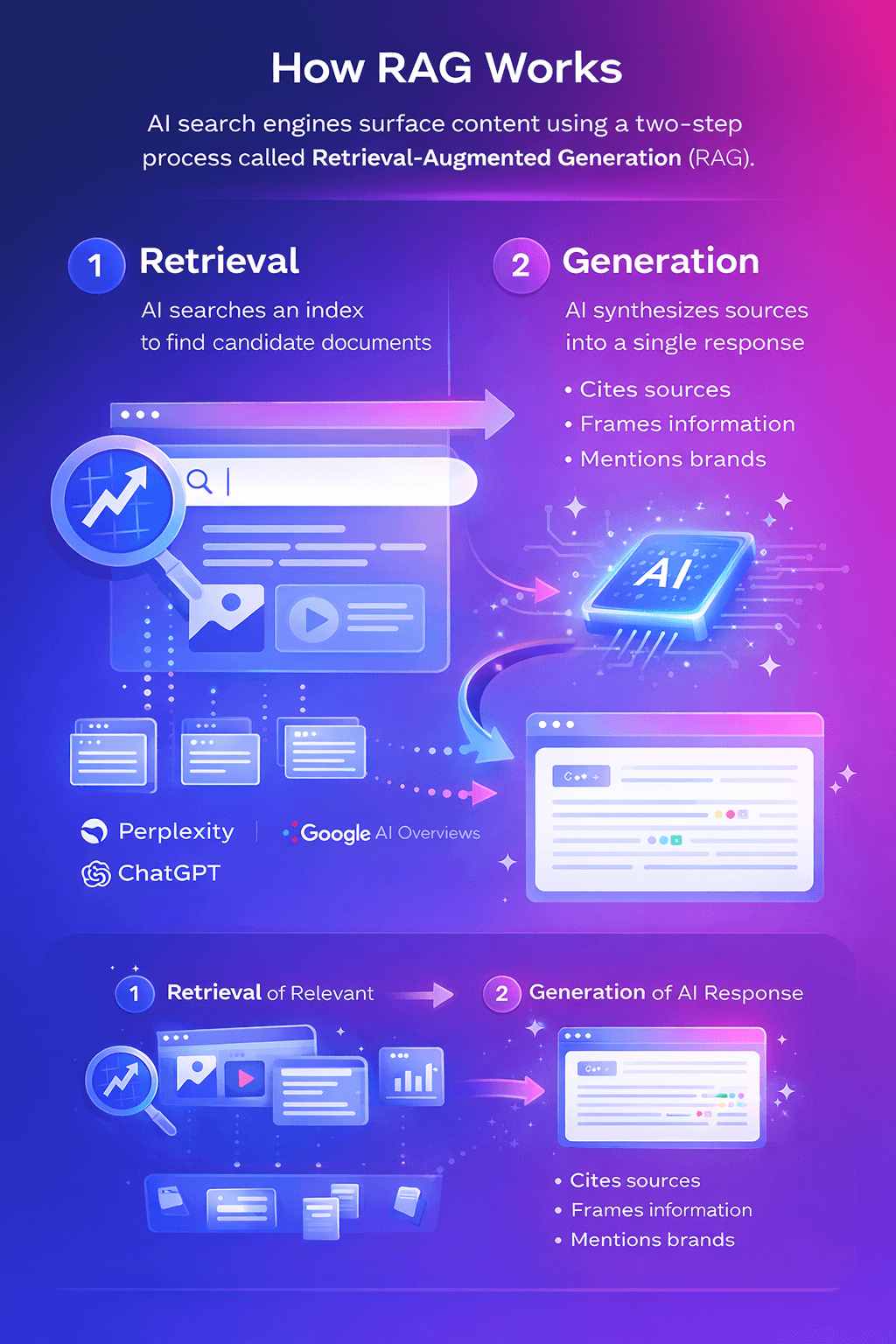

Before optimizing for AI visibility, it helps to understand why AI engines surface some brands and not others. Most modern AI search tools run on a two-step process called Retrieval-Augmented Generation (RAG).

First, retrieval: when a user submits a query, the AI system searches an index to find candidate documents. Perplexity crawls the live web. Google AI Overviews draws from Google’s search index. ChatGPT blends training data with real-time search when browsing is enabled. Each platform has different data sources, different retrieval biases, and different recency windows.

Second, generation: a large language model synthesizes those retrieved documents into a single response. This is where the AI decides which sources to cite, how to frame them, and which brands to mention by name.

And why not, I let ChatGPT create a visualization of this process. What do you think?
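For readers who prefer code to diagrams, the retrieve-then-generate flow can be sketched as a toy in Python. Everything here is a stand-in: real systems typically use far more sophisticated retrieval than word overlap, and a large language model rather than string stitching. The `index`, URLs, and function names are hypothetical.

```python
import re


def tokenize(text: str) -> set[str]:
    """Lowercase a string and split it into a set of word tokens."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))


def retrieve(query: str, index: dict[str, str], k: int = 2) -> list[str]:
    """Step 1: rank documents by word overlap with the query, keep the top k."""
    q = tokenize(query)
    scores = {url: len(q & tokenize(text)) for url, text in index.items()}
    ranked = sorted(scores, key=scores.get, reverse=True)
    return [url for url in ranked[:k] if scores[url] > 0]


def generate(sources: list[str], index: dict[str, str]) -> str:
    """Step 2: stand-in for the LLM; stitches retrieved text and cites it."""
    lines = [f"{index[url]} [{i}]" for i, url in enumerate(sources, start=1)]
    refs = [f"[{i}] {url}" for i, url in enumerate(sources, start=1)]
    return "\n".join(lines + refs)


# Hypothetical three-page index and query.
index = {
    "https://a.example/crm-pricing": "Acme CRM pricing starts low for small business teams.",
    "https://b.example/recipes": "A recipe for sourdough bread.",
    "https://c.example/crm-review": "Review: best CRM tools for small business.",
}
query = "best CRM for small business"
top = retrieve(query, index)
answer = generate(top, index)
```

The point of the toy is the shape, not the scoring: a page that never makes it through `retrieve` cannot be cited in `generate`, no matter how good it is.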

This is where generative engine optimization (GEO) comes in: the discipline of structuring your content and building your authority footprint so that AI systems select, cite, and surface your brand in their responses.

One thing I think most people underestimate: AI engines do not search for your primary keyword. They decompose your query into fan-out queries, a set of parallel sub-queries that each address a different aspect of the user’s intent. A user asking “what’s the best project management tool for remote teams?” might trigger sub-queries about collaboration features, pricing, integrations, user reviews, and use-case comparisons simultaneously. If your content covers the primary question but not those sub-queries, you’ll be cited selectively, and competitors who cover more of the query space will appear more consistently.

This is why optimizing content for LLMs requires a different approach to content design than traditional optimization. It’s not about keyword density. It’s about atomic, independently answerable sections that AI can retrieve and cite without needing the surrounding context.
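One rough way to audit a page against fan-out queries is to check which sub-queries each section could plausibly answer. The Python sketch below uses plain substring matching on hand-picked terms, which is an assumption made for brevity; it is not how AI engines match content, and the section names, sub-queries, and terms are hypothetical.

```python
def coverage_map(sections: dict[str, str],
                 sub_queries: dict[str, list[str]]) -> dict[str, list[str]]:
    """Map each sub-query to the section headings that mention any of its terms."""
    result = {}
    for sub_query, terms in sub_queries.items():
        result[sub_query] = [
            heading
            for heading, body in sections.items()
            if any(t.lower() in f"{heading} {body}".lower() for t in terms)
        ]
    return result


# Hypothetical page sections and the sub-queries you expect an AI engine to fan out to.
sections = {
    "Pricing": "Plans start at a flat monthly fee per user.",
    "Collaboration features": "Shared boards and comments keep remote teams aligned.",
}
sub_queries = {
    "pricing": ["pricing", "cost"],
    "integrations": ["integration", "api"],
    "collaboration": ["collaboration", "shared"],
}

covered = coverage_map(sections, sub_queries)
gaps = [q for q, headings in covered.items() if not headings]
```

Here `gaps` contains only `"integrations"`, which is the kind of missing sub-topic that leads to selective citation.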

AI visibility vs traditional SEO: what changes and what stays the same

AI visibility and traditional SEO measure different things. SEO tracks where your web pages rank in search results. AI visibility tracks whether AI engines include your brand in a synthesized answer, where there is no ranked list and there are no positions. A brand can rank first in Google and be invisible in AI answers for the same query.

I think this is where most people initially get it wrong. They assume AI visibility is just SEO with a new name. It isn’t. The mechanics of success are different, the metrics are different, and, most importantly, the competitive dynamics are different.

The core difference: ranking model vs citation model

The differences become clear once you put the two side by side. When comparing SEO and GEO, the shift from ranking to citation is what changes everything in practice:

| Dimension | Traditional SEO | AI visibility |
| --- | --- | --- |
| Primary metric | Search ranking position (1–10) | Citation presence (yes/no per prompt) |
| User action | Click through to a website | Read the synthesized answer in-platform |
| Content format | Optimized web page competing for a position | Source cited within a single AI-generated response |
| Success signal | Higher rank = more clicks | More citations = more brand exposure at the decision stage |
| Competitive frame | 10 positions on page one | Typically 2–5 citations per answer |
| Update cycle | Algorithm updates (periodic) | Model retraining + real-time search (continuous) |
| Traffic impact | Direct website visits | Brand awareness, pre-qualified downstream traffic |
| Measurement | Rank trackers, Search Console, analytics | AI visibility platforms, prompt-level monitoring |

That last row matters more than it seems. Your existing analytics stack is largely blind to AI visibility. Search Console bundles AI Overview impressions into organic data without separating them. There is no native dashboard for ChatGPT or Perplexity referral behavior at the brand-mention level.

Why strong SEO doesn’t guarantee AI visibility

In practice, traditional search authority and AI citation frequency correlate weakly. AI engines favor content that is easy to extract, explicitly structured, and corroborated by third-party sources, not necessarily content from the domains with the highest domain authority.

This has a practical implication for teams optimizing for Google’s AI Mode specifically: AI Mode is its own surface, with its own retrieval logic. A page that ranks in Google’s traditional results is eligible for AI Mode consideration, but eligibility and selection are different things.

SEO is still the foundation, but it needs to be extended

None of this means SEO is irrelevant. AI engines consistently favor content that already performs well in traditional search: organic authority is the entry requirement, not the winning condition. Put simply: if your pages can’t be found and indexed, they can’t be retrieved. SEO gets you into the pool; GEO and answer engine optimization (AEO) determine whether you get cited.

The teams doing this well are treating AI for SEO as a workflow expansion, not a replacement. They’re still building authority and doing keyword research, but they’re also revising how they structure content and which signals they optimize for. Part of that change involves new practices, such as GEO keyword research, where zero-click rate, query intent, and sub-query coverage matter as much as traditional volume and difficulty.

Why AI visibility matters: the buyer journey has moved upstream

AI visibility matters because generative AI platforms are now a primary discovery channel at the earliest stage of the buyer journey. According to Similarweb’s 2026 Generative AI Brand Visibility Index, 35% of US consumers use AI at the product discovery stage, compared to 13.6% who use traditional search. The shortlist is being set before the search begins.

At first, I thought the traffic argument was overstated. Then I looked at what that 35% figure actually represents: not experimental users, not early adopters, but general consumers, making real purchase decisions, using AI as their first filter. That changes how I think about where brand awareness needs to live.

The consumer journey has shifted upstream

AI is changing the consumer journey in a fundamental way, not just a behavioral one. When a consumer asks ChatGPT to recommend an accounting tool for a small business, and ChatGPT names three options, those three brands have captured the moment of intent formation. The brands that weren’t mentioned don’t get a second chance through better meta descriptions or a higher organic rank.

Gen AI referral traffic to transactional sites grew 357% year over year, according to Similarweb’s 2026 data, and those visitors convert at roughly 7%, higher than most traditional organic channels. The reason is straightforward: a user who arrives from an AI recommendation has already been pre-qualified. The AI told them you were relevant. They’re not browsing. They’re evaluating.

For teams building the business case for this investment, the article on understanding the ROI of AI visibility lays out a valuation model that accounts for this pre-qualification effect.

The brand mention is the new conversion event

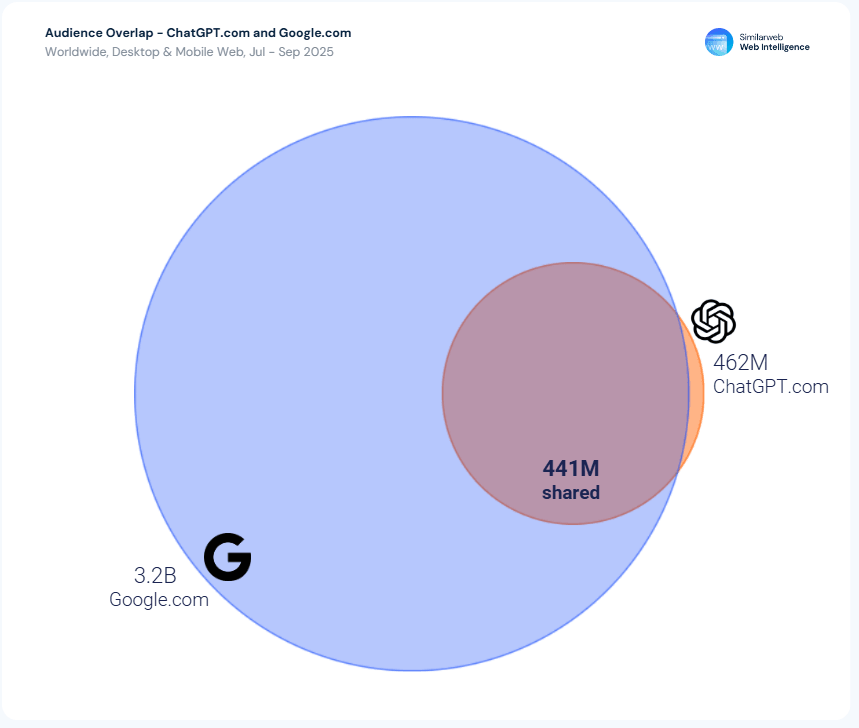

In a zero-click environment, the AI answer is often the only touchpoint. Similarweb data from the 2025 Generative AI Landscape report shows that the vast majority of ChatGPT users also use Google: AI isn’t replacing search, it’s layering on top of it. But in that layer, what matters is the mention, not the click. Being named in the answer creates awareness and credibility that carries forward into every subsequent interaction with your brand.

On getting traffic from AI: the traffic it does send is lower volume than organic, but the intent is higher. It’s worth building for, even while recognizing that the primary value of AI visibility sits upstream of the click.

Being absent from AI answers gets more expensive over time

AI recommendations are functionally zero-sum in the short response window. When ChatGPT recommends three tools, the other forty in the category get nothing. Worse: once a brand becomes established as a cited source, AI engines tend to reinforce that selection. Citation history is itself a signal. The brands building AI visibility now are compounding an advantage that will be progressively harder to close.

If you’re still weighing whether to invest in AI visibility, the answer is clear: yes.

How to measure AI visibility: the four metrics that matter

Here’s where I think most teams start wrong: they pick one metric, usually “does our brand appear in ChatGPT when I search for our category”, and treat that as a comprehensive measurement. It isn’t. A brand can have a high mention rate and a low citation rate (visible but not trusted as a source). A brand can have strong citations and negative sentiment (referenced, but unfavorably). You need all four to understand where you actually stand.

The four core AI visibility KPIs

Brand visibility % – The percentage of tracked AI responses, across a defined prompt set, in which your brand appears.

Brand mention share – Your share of all brand mentions within those AI answers, relative to competitors named in the same responses.

Citation influence score – A measure of how authoritatively your content is cited. An AI engine that links to your homepage differs from one that cites a specific research page or deep-content resource. The distinction matters for both traffic and for the authority signal you’re building with the AI engine.

Sentiment – When analyzing AI sentiment, the framing matters as much as the frequency. A brand mentioned in 60% of responses is losing ground if the majority of those mentions carry phrases like “mixed reviews” or “pricing concerns.” Sentiment tracks whether visibility is working for you or quietly working against you.
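The first two KPIs are simple ratios, and a short Python sketch shows how they differ. This is one plausible reading of the definitions above, not the formula any particular platform uses; the input format (one list of named brands per tracked AI response) and the brand names are assumptions.

```python
from collections import Counter


def visibility_kpis(responses: list[list[str]], brand: str) -> tuple[float, float]:
    """Return (brand visibility %, brand mention share %) for a tracked prompt set.

    Each item in `responses` is the list of brands named in one AI answer.
    """
    if not responses:
        return 0.0, 0.0

    # Brand visibility %: share of responses in which the brand appears at all.
    appearances = sum(1 for brands in responses if brand in brands)
    visibility = 100 * appearances / len(responses)

    # Brand mention share: the brand's mentions as a share of all brand mentions.
    all_mentions = Counter(b for brands in responses for b in brands)
    total = sum(all_mentions.values())
    share = 100 * all_mentions[brand] / total if total else 0.0
    return visibility, share


# Hypothetical results from four tracked prompts.
responses = [["Acme", "Globex"], ["Globex", "Initech"], ["Acme"], []]
visibility, share = visibility_kpis(responses, "Acme")
```

In this example Acme appears in 2 of 4 responses (50% visibility) but accounts for 2 of 5 total brand mentions (40% mention share), which is why the two numbers need to be read together.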

How to track AI visibility manually

The simplest approach is to build a prompt list. Identify 15–20 questions you assume your buyers actually ask AI platforms about your category. Run each through ChatGPT, Perplexity, Google AI Mode, etc. Log the results weekly: Does your brand appear? Is the mention linked or unlinked? What’s the framing?

This is how I approach building a prompt list: start with the evaluation-stage questions (“what’s the best [category] for [use case]?”), then the comparison questions (“how does [your brand] compare to [generic category]?”), then the definitional questions (“what is [your category]?”). The first group is where purchase decisions form; the third is where your brand gets defined for users who’ve never heard of you.

Prompt tracking helps systematize how you log and compare results across platforms and over time. Separately, track brand mentions in AI to monitor when your brand name appears with or without a citation link. That distinction is easy to miss when reviewing responses manually.
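If you keep the manual log in a spreadsheet, a fixed record shape helps keep weekly runs comparable. The Python sketch below is one possible layout, assuming the three questions above (appeared, linked, framing) are the fields you care about; the class name, field names, and sample row are hypothetical.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields
from datetime import date


@dataclass
class PromptCheck:
    checked_on: str       # ISO date of the manual run
    platform: str         # e.g. "ChatGPT", "Perplexity"
    prompt: str           # the exact question you asked
    brand_appeared: bool  # did the brand show up in the answer?
    linked: bool          # True if the mention came with a citation link
    framing: str          # free-text note on how the brand was described


def to_csv(rows: list[PromptCheck]) -> str:
    """Serialize the weekly log to CSV text with a header row."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(PromptCheck)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))
    return buf.getvalue()


# One hypothetical entry from a weekly run.
rows = [
    PromptCheck(
        checked_on=date(2026, 3, 2).isoformat(),
        platform="ChatGPT",
        prompt="what's the best CRM for small business?",
        brand_appeared=True,
        linked=False,
        framing="listed third, neutral",
    )
]
csv_text = to_csv(rows)
```

Because individual runs vary, it is worth logging the same prompt more than once per week before reading much into a single row.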

How to measure AI visibility with Similarweb

At scale, manual tracking breaks down quickly. The prompt surface is too large, the platforms are too many, and the variance across individual runs is too high to draw reliable conclusions from a handful of manual checks.

Similarweb’s AI Search Intelligence gives marketers four tools to measure AI visibility: AI Brand Visibility, Prompt Analysis, Citation Analysis, and Sentiment Analysis. Together they answer the four questions that matter most: Are you visible, who sees you, what do they see, and does it drive results.

AI brand visibility

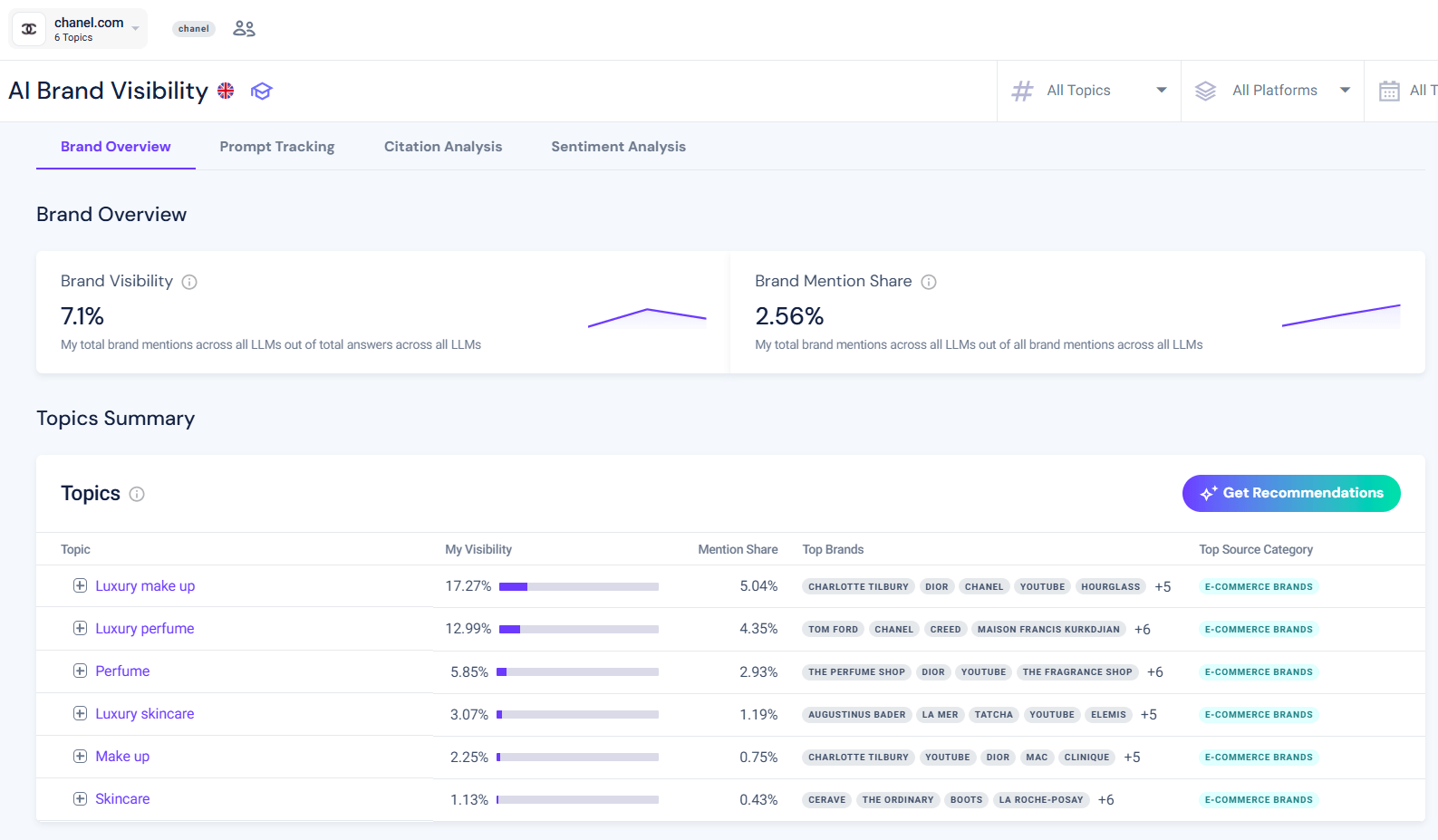

Similarweb’s AI Brand Visibility tracks your brand visibility % and mention share across a configured set of topics and competitors, updated continuously across ChatGPT, Gemini, Perplexity, and AI Mode.

In the example below, we can see that the AI visibility campaign I set up for Chanel has an overall AI visibility of 7.1%, meaning it appears in a relatively small share of AI-generated responses, with a 2.56% mention share compared to competitors. Visibility is strongest in high-intent categories like Luxury makeup (17.27%) and Luxury perfume (12.99%), where the brand appears more frequently in AI answers. However, its mention share in these same categories remains modest, suggesting competitors like Dior and Charlotte Tilbury are still referenced more often. Lower visibility across broader topics like Makeup and Skincare highlights an opportunity to expand presence beyond niche luxury segments and increase overall share of voice in AI-driven discovery.

Prompt Analysis

The AI Prompt Analysis tool shows the actual questions users ask inside AI chatbots for any topic, with the AI’s latest response, whether your brand appeared, and whether that mention was positive, neutral, or negative. The first time I ran a Prompt Analysis campaign, I expected to see a handful of brand mentions. What I found was more useful: a map of exactly which questions my audience was asking AI, which competitors were showing up for each one, and which sources AI was treating as authoritative. That changed how I think about content planning.

Let’s see how Chanel stacks up.

Here we see how AI Prompt Analysis surfaces where you’re already winning, and where competitors are owning the conversation. For high-intent queries like bold luxury fragrances, the brand achieves full visibility (100%) and appears in a positive context. But even within those wins, competitor brands like Tom Ford and Creed are still prominently recommended, with Chanel appearing as one of several options rather than the default choice.

This highlights an important nuance: showing up isn’t the same as owning the answer. You can see exactly how AI positions your brand, which competitors are co-mentioned, and how responses are framed, giving you clear direction on how to refine content to become the primary recommendation, not just part of the list.

But what happens when you’re not mentioned?

Here we see the flip side: missed opportunities where your brand isn’t mentioned at all. For practical, conversion-driven queries, like whether luxury fragrance sites offer samples at checkout, the visibility drops to zero, despite these being highly relevant moments in the customer journey. The AI provides a detailed, helpful response, but without referencing the brand, meaning competitors or generic sources are capturing that attention instead.

This is where Prompt Analysis becomes especially actionable: it exposes gaps in your content strategy, helping you identify which topics need better coverage, clearer authority signals, or stronger alignment with how people actually ask questions in AI tools.

Citation Analysis

The AI Citation Analysis tool reveals the exact URLs AI engines cite when answering prompts in your category: third-party publishers, review sites, forums, and competitor domains, each ranked by citation influence score.

Here we can see how Citation Analysis maps the sources shaping AI-generated answers in your category.

While traditional publishers like Vogue and Allure appear prominently, the largest influence comes from a broader mix of domains, including marketplaces like Alibaba, community-driven platforms like Reddit, and niche tools like WhatScent. This makes it clear that AI engines aren’t relying on a single type of authority source; they’re aggregating signals from editorial, commercial, and user-generated content alike. For brands, this changes the playbook: winning visibility isn’t just about PR placements, but about showing up across the full ecosystem of sources AI trusts.

As we drill down to the exact URLs influencing AI responses, ranked by their citation impact, we can see a mix of brand-owned content, retailer pages, and publisher articles contributing to AI answers across topics like luxury makeup and perfume.

Notably, multiple entries from sites like Fragrantica appear repeatedly, reinforcing how certain domains build authority through depth and topical coverage. This level of detail turns Citation Analysis into a practical roadmap: instead of guessing where to build authority, you can identify the specific pages and platforms AI already trusts, and prioritize partnerships, content creation, or optimization efforts accordingly.

Sentiment Analysis

Sentiment Analysis filters all AI brand mentions by positive, neutral, or negative tone, and benchmarks your sentiment score against competitors. A brand can appear in 60% of AI answers and still be losing ground if most of those mentions carry outdated or negative framing. Sentiment catches that before it compounds.

See how Sentiment Analysis breaks down how your brand is being described, not just how often it appears.

In this case, Chanel is in a strong position overall, with 81% positive mentions and zero negative sentiment. Categories like Perfume and Luxury perfume are especially strong, with overwhelmingly positive framing, while areas like Make-up show a more balanced mix of positive and neutral mentions. This kind of breakdown helps distinguish between true brand strength and areas where perception is still forming, highlighting where messaging is landing well versus where it may lack clarity or differentiation.

Now let’s put that sentiment into context by benchmarking against competitors.

While Chanel performs well in categories like Luxury perfume (0.93 sentiment score), competitors such as Dior and Byredo achieve perfect scores in the same space, and Tom Ford leads in Luxury makeup. This shows that even with strong positive sentiment, competitors can still be positioned more favorably by AI. It also surfaces more subtle risks, like Tom Ford’s negative sentiment in Perfume, that indicate how quickly perception can shift.

Together, this view helps you understand not just if you’re being mentioned positively, but whether you’re actually leading the narrative in your category.

How to improve your AI visibility

Improving AI visibility requires two parallel workstreams: on-site optimization (structuring content so AI systems can extract and cite it) and off-site authority building (earning brand mentions on the third-party sources AI engines consistently rely on). A GEO audit is the right starting point for either, as it maps where you currently stand before you invest in fixing anything.

On-site: structure your content for AI extraction

AI engines don’t read articles. They extract content chunks. A page built for narrative flow, with a slowly building argument and the payoff at the end, is hard for a retrieval system to parse into citable units. By contrast, a page optimized for AI extraction puts the answer at the top of every section, makes definitions explicit, and keeps each sub-section independently intelligible.

Four content requirements that actually move the needle:

Answer-first H2 openers. Every major section should open with a 30–60 word standalone answer that directly addresses the H2 heading. No warm-up. No “as we discussed above.” The first sentences of each section are what AI engines extract most reliably.

Explicit definitions. “AI visibility is defined as…” performs better than “AI visibility refers to the way brands appear in…” The phrase “is defined as” is a retrieval signal. Use it every time you introduce a concept that could be the answer to a definition query.

FAQ sections with FAQPage schema. The question-and-answer format is the closest format match to a guaranteed AI retrieval signal. Each FAQ answer should be 50–100 words, self-contained, and answer the question in the first sentence. Apply FAQPage schema markup.

Statistics with full attribution. Adding statistics improves LLM citation rates by up to 41%, according to Princeton’s GEO-Bench research. The format matters: every quantified claim needs a number, the population it applies to, the timeframe, and the source. “AI search is growing” is noise. “Generative AI platforms attracted 8.5 billion average monthly web visits in February 2026, up 76% year over year (Similarweb GenAI Landscape, 2025)” is citable.
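For the FAQPage requirement, the markup itself is short. The Python sketch below builds schema.org FAQPage JSON-LD from question and answer pairs and adds a helper for the 50–100 word guideline mentioned above. The function names are hypothetical, and the length check is only this article’s rule of thumb, not part of the schema.

```python
import json


def faq_jsonld(faqs: list[tuple[str, str]]) -> str:
    """Build schema.org FAQPage JSON-LD from (question, answer) pairs."""
    data = {
        "@context": "https://schema.org",
        "@type": "FAQPage",
        "mainEntity": [
            {
                "@type": "Question",
                "name": question,
                "acceptedAnswer": {"@type": "Answer", "text": answer},
            }
            for question, answer in faqs
        ],
    }
    return json.dumps(data, indent=2)


def word_count_ok(answer: str, low: int = 50, high: int = 100) -> bool:
    """Check an answer against the 50-100 word guideline from this article."""
    return low <= len(answer.split()) <= high


markup = faq_jsonld([("Is AI visibility replacing SEO?", "No.")])
```

The resulting string is typically placed in the page inside a `<script type="application/ld+json">` element; the one-word sample answer here would fail `word_count_ok` and should be expanded before publishing.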

Our article about adapting your SEO strategy to support AI visibility growth covers the strategic layer in full. Check it out.

Off-site: build the authority ecosystem AI engines trust

85% of brand mentions in AI responses originate from third-party pages, not from the brand’s own website. AI engines learn about your brand from every mention across the web. If your positioning and core claims don’t appear consistently in external publications, review platforms, industry forums, and analyst coverage, AI systems struggle to form a coherent picture of who you are.

Earning coverage in the right external sources matters more than almost anything you do on your own site. Identify the publications, forums, and review platforms that AI engines already cite in your category (Citation Analysis makes this visible directly), then build a systematic presence there.

What drives AI recommendation visibility for websites

Most guides get this wrong. They focus almost entirely on content structure and technical fixes. Those matter, but drivers three and four below move the needle faster than anything you do on-page.

1. Topical authority – Consistent, structured coverage of your category across multiple pages. AI engines are more likely to cite brands that cover a topic comprehensively from multiple angles (definition, comparison, how-to, and metrics) than brands with a single well-optimized page.

Read more – How to Build a Topical Authority System for AEO

2. Entity clarity – AI engines must be able to identify unambiguously what your brand does, for whom, and in which category. If your homepage doesn’t state this clearly, or if your brand is described inconsistently across your owned channels, AI systems will either not surface you or surface you inaccurately.

3. Prompt intent alignment – Your content must match the intent pattern of the prompts users ask AI. Informational prompts (“what is…”) retrieve differently from evaluation prompts (“which is better…”) and purchase prompts (“best [category] for [use case]”). Each requires a different content structure and depth.

4. Third-party corroboration – External sources confirming your brand’s claims, competencies, and positioning. A brand that says it’s a market leader on its own homepage is invisible to AI retrieval in a way that a brand mentioned as a market leader across ten independent publications is not.

5. Citation history – Once a source has been cited, AI engines tend to cite it again. Early citation establishes a feedback loop that is progressively harder for later entrants to break.
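Driver 3 (prompt intent alignment) is the easiest of the five to approximate in code. The Python sketch below sorts prompts from your tracking list into the three intent patterns named above using simple phrase rules. The rules are assumptions chosen for illustration and will misclassify plenty of real prompts; treat the output as a first pass for manual review.

```python
def prompt_intent(prompt: str) -> str:
    """Roughly bucket a prompt as purchase, evaluation, or informational."""
    p = prompt.lower().strip()
    # Purchase pattern: "best [category] for [use case]".
    if " best " in f" {p} ":
        return "purchase"
    # Evaluation pattern: direct comparisons between options.
    if any(marker in p for marker in ("which is better", " vs ", "compare")):
        return "evaluation"
    # Informational pattern: definitional and explanatory questions.
    if p.startswith(("what is", "what are", "how does", "why ")):
        return "informational"
    return "unclassified"
```

Grouping your prompt list this way makes it easier to see whether your content has a matching structure for each intent, for example a definition page for informational prompts and a comparison page for evaluation prompts.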

Analyzing your competitors’ AI visibility is the fastest way to reverse-engineer which of these drivers your category leaders are winning on.

AI visibility is now a core growth channel

Moving from ranking to being cited isn’t a change in tactics. It’s a change in what it means to be discoverable. A brand that ranks first in Google but doesn’t appear in AI answers is, from the perspective of a growing share of buyers, invisible at the most influential moment in their decision process.

The brands that move fastest on this tend to be the ones that stop treating AI visibility as a separate workstream and start treating it as the natural extension of everything they’re already doing in SEO and content. The work required (explicit definitions, answer-first sections, stat-dense content, and external authority building) isn’t novel. It’s just more demanding. And the compounding effect of early citation means the cost of waiting is real.

The brands building AI visibility now aren’t just gaining exposure. They’re setting the default answers before their competitors even know the question has changed.

If you want to see where your brand actually stands, you can try Similarweb’s AI Search Intelligence with a free trial.

FAQs

How long does it take to improve AI visibility?

It depends on what you are fixing. On-site changes like restructuring content can influence visibility within weeks, especially for long-tail prompts. Off-site authority building takes longer because AI engines rely on accumulated signals from multiple sources over time. Most teams start seeing measurable movement within one to three months if they are consistent.

Is AI visibility replacing SEO?

No. Similarweb data from Q4 2025 shows 95% of ChatGPT users also use Google. AI search is being added to existing search behavior, not replacing it. The practical implication: brands need both, and treating them as competing priorities means underinvesting in one or the other. SEO is the foundation; AI visibility is the layer on top that determines whether your SEO investment reaches buyers at the moment they form their shortlist.

Can smaller brands compete with established players in AI answers?

Yes, but not by copying traditional SEO playbooks. AI systems often favor clarity, specificity, and relevance over brand size alone. A smaller brand with focused, well-structured content and strong third-party validation in a niche can appear more often than a larger but less specialized competitor.

Do paid ads influence AI visibility?

No, AI-generated answers are not directly influenced by paid search or display campaigns. However, ads can still support visibility indirectly by increasing brand awareness, which may lead to more mentions across the web. Those mentions can later influence how AI systems understand and surface your brand.

How often should you update content for AI visibility?

Regular updates help maintain relevance, especially for topics that change quickly. AI systems prioritize freshness differently depending on the query, but updating key pages every few months with new data, clearer structure, or expanded coverage can improve your chances of being cited consistently.

What is the biggest mistake teams make when starting with AI visibility?

Focusing only on whether they appear in answers, without analyzing how they appear. Visibility without context can be misleading. If your brand is mentioned but framed poorly or always listed behind competitors, the impact is limited. Quality and positioning matter as much as presence.
